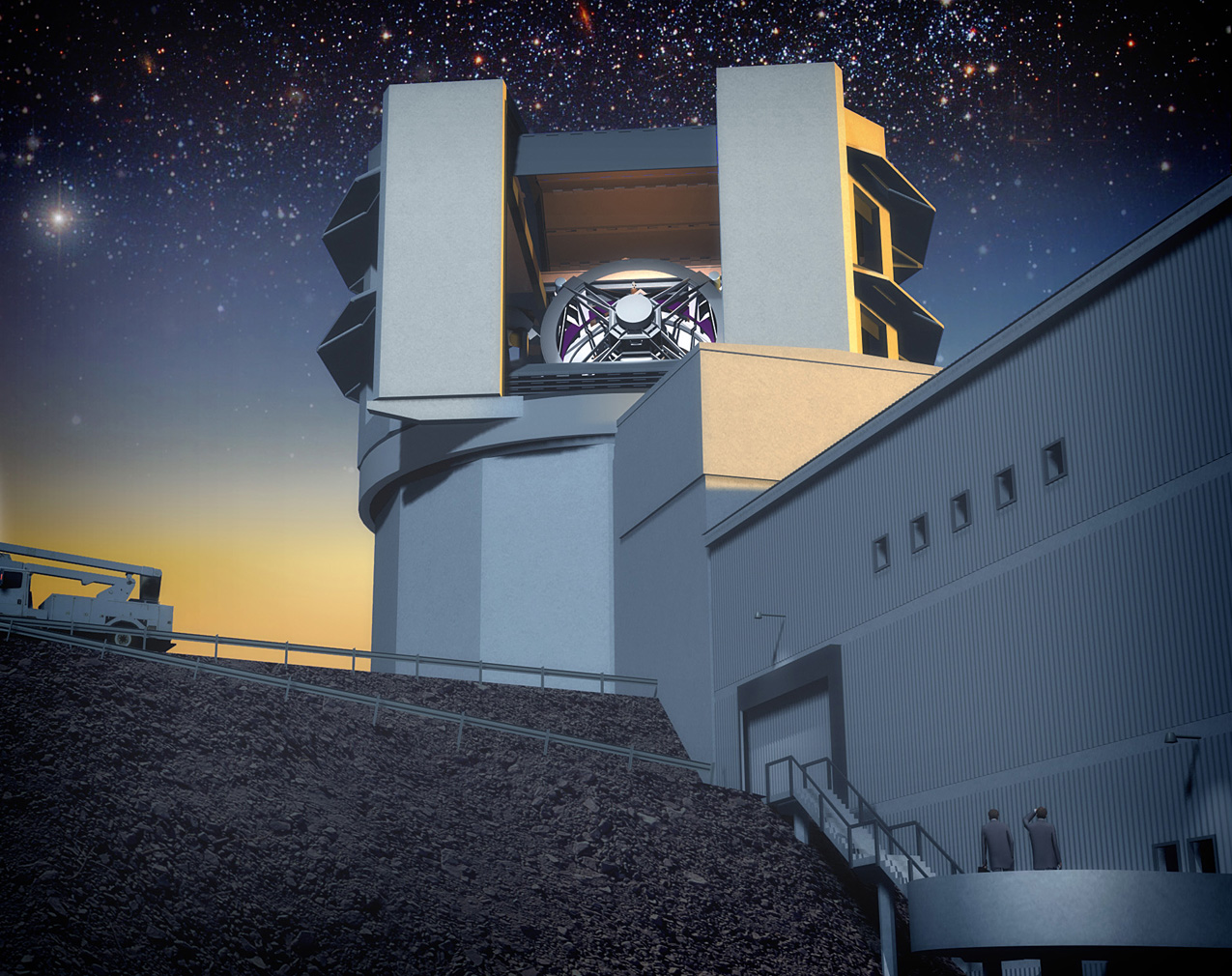

I would consider myself an observational astronomer, yet most of the data I work with on a daily basis was not collected by me. It was collected instead by a telescope methodically combing the sky under the dark skies of New Mexico. This telescope is the workhorse of the Sloan Digital Sky Survey (SDSS), and it has fundamentally changed the way astronomers do business. In doing so, it has launched a digital revolution in data handling and brought the issue of data mining front and center in our field.

What is data mining exactly? The Wikipedia definition: “Data mining, a branch of computer science, is the process of extracting patterns from large data sets by combining methods from statistics and artificial intelligence with database management.” In astronomy, the employment of these techniques is morphing into a sub-field in its own right, Astroinformatics.

Why do we need to think about this? The SDSS has become an amazing tool for astronomers studying the evolution and environment of galaxies, distant objects like quasars, and more nearby objects like dark satellites around our Milky Way, to name a few. Sloan has provided over a million spectra and hundreds of millions of photometric observations. The survey has given us the largest picture yet of a sky we have studied for millennia. The next generation of surveys will go much further: Pan-STARRS and the Large Synoptic Survey Telescope (LSST) will pull in PETABYTES of data, and probably more over their lifetimes! To highlight a few challenges facing the LSST effort in particular:

- Each image is taken in 15 seconds and will be on the order of several gigabytes. This must be read out in several seconds. Then repeat, taking a second image of the same field for science validation.

- Process and catalog up to 100 million sources in that pair of images, and send out worldwide alerts within a minute of transient (changing with time) events like supernovae, asteroids, etc.

- Generate up to 100,000 alerts per night and up to 2000 images EACH NIGHT = 30 terabytes of information EACH NIGHT = the entirety of the SDSS EACH NIGHT! This would fill 40,000 CDs with data… you guessed it… EACH NIGHT!

- Move data from South America where the telescope will be located to the US daily.

- Do this for 10 years and allow for easy database access worldwide.

This works out to some 50 billion objects that will need to be classified, and a lot of them will need to be monitored for real-time variations. You can begin to see why this is a problem that needs tackling.
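As a rough sanity check, the figures quoted in the list above can be run through some back-of-the-envelope arithmetic. This is only a sketch: it uses decimal units, and it assumes observing on every night of the year, which overstates the total since weather and maintenance will cost nights. The function names are illustrative, not part of any LSST software.

```python
# Back-of-the-envelope arithmetic using the LSST figures quoted above.
TB_PER_NIGHT = 30        # quoted nightly data volume, in terabytes
IMAGES_PER_NIGHT = 2000  # quoted upper figure for images per night
CDS_PER_NIGHT = 40_000   # quoted number of CDs filled per night
SURVEY_YEARS = 10        # quoted survey duration
NIGHTS_PER_YEAR = 365    # ASSUMPTION: observing every night (an upper bound)


def average_gb_per_image():
    """Nightly volume spread evenly over the nightly images, in gigabytes."""
    return TB_PER_NIGHT * 1000 / IMAGES_PER_NIGHT


def implied_mb_per_cd():
    """Capacity per CD implied by the quoted numbers, in megabytes."""
    return TB_PER_NIGHT * 1_000_000 / CDS_PER_NIGHT


def survey_total_pb():
    """Total volume over the full survey, in petabytes (upper bound)."""
    return TB_PER_NIGHT * NIGHTS_PER_YEAR * SURVEY_YEARS / 1000


if __name__ == "__main__":
    print("Average per image (GB):", average_gb_per_image())
    print("Implied CD capacity (MB):", implied_mb_per_cd())
    print("Ten-year total (PB):", survey_total_pb())
```

The implied CD capacity comes out close to that of a real CD, so the quoted numbers hang together, and the ten-year total lands on the order of a hundred petabytes under the every-night assumption.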

What are the current strategies? One strategy that has already proven to be effective and very useful is citizen science. Galaxy Zoo is an example of how the public can use their passion for space to classify galaxies in a fun environment where they feel they are making a real contribution to science. But this isn’t enough. There are not enough people in the world to classify and prioritize all the billions of objects telescopes like LSST will observe.

The success of LSST and other future surveys dealing with large data sets will hinge on our ability to create pipelines of code that decide for us how objects should be classified and that sort the information, in real time, into searchable databases astronomers can access from anywhere. Luckily, this is not a new problem. The field of biology encountered it when work on mapping the human genome began. Surveying the DNA sequences and mapping how variations lead to different human conditions like disease susceptibility is no small undertaking. Too much data is a good problem to have, but unless better algorithms are created to work with the larger data sets, we are no better off. Time to become even better friends with the computer science and statistics departments!
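To make the idea of a classify-and-store pipeline concrete, here is a deliberately tiny sketch. It is not how LSST or any real survey does it: the brightness-change rule, the threshold, the table layout, and the sample detections are all made up for illustration, and a real pipeline would use far more sophisticated classifiers. It uses only Python's built-in `sqlite3` module.

```python
import sqlite3


def classify(mag_first, mag_second, threshold=0.5):
    """Toy rule (an assumption, not a real survey criterion): call a source
    'transient' if its magnitude changed by more than `threshold` between
    the two images of a pair, otherwise 'steady'."""
    return "transient" if abs(mag_first - mag_second) > threshold else "steady"


def build_catalog(detections):
    """Classify each detection and load it into an in-memory database.

    Each detection is a tuple: (source_id, ra, dec, mag_first, mag_second).
    """
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE sources ("
        "source_id INTEGER PRIMARY KEY, ra REAL, dec REAL, label TEXT)"
    )
    rows = [
        (sid, ra, dec, classify(m1, m2))
        for sid, ra, dec, m1, m2 in detections
    ]
    conn.executemany("INSERT INTO sources VALUES (?, ?, ?, ?)", rows)
    # An index on the label keeps lookups by class fast as the table grows.
    conn.execute("CREATE INDEX idx_sources_label ON sources (label)")
    conn.commit()
    return conn


def find_by_label(conn, label):
    """Return the ids of all sources carrying the given label."""
    cur = conn.execute(
        "SELECT source_id FROM sources WHERE label = ? ORDER BY source_id",
        (label,),
    )
    return [row[0] for row in cur.fetchall()]


if __name__ == "__main__":
    # Made-up detections: (id, ra, dec, magnitude in image 1, magnitude in image 2)
    sample = [
        (1, 10.0, -5.0, 18.2, 18.25),
        (2, 11.0, -5.5, 20.0, 17.5),
        (3, 12.0, -6.0, 19.0, 19.1),
    ]
    catalog = build_catalog(sample)
    print("Transient candidates:", find_by_label(catalog, "transient"))
```

The point of the sketch is the shape of the problem: a decision made in code for every source, followed by storage in a form that can be queried later. Scaling that shape to billions of objects within a minute of each exposure is where the hard work lies.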

How does this affect your career? When you begin research, one of the most precious things to you is your data. Collecting your own data is a time-intensive process: submitting a telescope proposal that may not be accepted, learning how to take the observations, traveling to the scope (in some cases), and finally reducing the data you collect into a form that is easy to work with. It is no wonder surveys like the SDSS and online databases and services offered by the National Virtual Observatory are so popular. There are no proposals, the data is of scientific quality and already reduced, and depending on your field of study, you can have all the data you need to complete a research project on your hard drive in less than a couple of minutes. While this will probably not suffice for a thesis project that requires other observations of specific objects, it is a perfect way to get your hands dirty in the science and a great supplement to any study. The next generation of surveys will enable even more science to be performed solely from a downloaded file, but whether that is possible depends on our ability to make the data useful, searchable, and downloadable. Welcome to the world of Astroinformatics!

Want to read more? I have by no means gone into any detail here, so for a more complete diagnosis of the challenges of data management in science, check out this slightly advanced, but very interesting book on the subject, The Fourth Paradigm. More for free on the blog here. Also check out the evolving astrobites article: Databases of Astronomy for more on other databases in the field. Finally, make sure to read through the science books chock-full of information on the LSST and Dark Energy Survey (DES) sites.

great post. “Time to become even better friends with the computer science and statistics departments!” — especially important to understand our field as cross-disciplinary!

One would probably also do well to take charge of structuring one’s undergraduate and graduate training to include courses in scientific computing and statistics, since it is not (yet) commonplace to have such courses integrated by design. The added bonus is that astronomy training supplemented with data mining would broaden your career options down the road.

The Virtual Observatory is far more than just an “online database”. The VAO (http://www.usvao.org) is working on providing data AND services – SED builders, crossmatchers, time series analysis, etc. this year and data mining tools in future years.

Hi Matthew! Thanks for pointing that out. I have reworded the article accordingly to not make it sound like the database is the VAO’s only service.

That’s a tall order, Matthew. I’d rather see the VAO as an efficient “google for astronomy” of sorts first. If you can’t find what’s out there, you won’t be able to do much with it…

Although SDSS observing is systematic, the primary (2.5m) SDSS telescope is not robotic; there was (is) an observing staff that plays an essential role. The 0.5m PT, used for photometric calibration, is arguably fairly well automated, but even this telescope requires human attention to begin and end observing.

Hi Eric! You’re right, I was thinking of the companion which is automated. Wording has been fixed.