Title: Forecasting cosmological parameter constraints from near-future space-based galaxy surveys

Authors: Anatoly Pavlov, Lado Samushia, Bharat Ratra

First Author’s Institution: Department of Physics, Kansas State University

Dark Energy and the Accelerating Expansion of the Universe

The 2011 Nobel Prize in Physics was awarded to Saul Perlmutter, Brian Schmidt, and Adam Riess, whose two supernova teams uncovered evidence for the accelerating expansion of the universe. We’ve known that space is expanding since the work of Hubble in the 1920s, but their discovery that it is expanding faster now than it was in the past came as a shock.

This is weird! If anything, we expected the expansion of space to be decelerating due to the gravitational pull of all the matter and radiation in the universe. In other words, neither radiation nor normal matter (nor even dark matter) could be responsible for speeding things up. We might rightfully ask whether anything in the established theories could account for this.

In fact, there is room in the standard, general relativistic picture of the universe for what’s known as the cosmological constant, Λ. It enters the theory in a way analogous to a constant of integration, so in some sense it comes “for free” without having to postulate new matter or rewrite relativity. It behaves like an energy density that stays constant as space expands and that exerts a negative pressure, and this negative pressure drives the kind of accelerating expansion we see. What’s more, it’s truly a constant of the universe, not a form of matter, and doesn’t need to be explained by new particles or fields.
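A short worked equation shows why pressure, not just energy density, decides whether the expansion speeds up. In the standard Friedmann acceleration equation, each component contributes a term proportional to −(ρ + 3p/c²). Treating Λ as a fluid with density ρ_Λ = Λc²/(8πG) and pressure p_Λ = −ρ_Λc² (the usual identification, assumed here) flips the sign of its contribution:

```latex
\frac{\ddot a}{a} = -\frac{4\pi G}{3}\left(\rho + \frac{3p}{c^2}\right) + \frac{\Lambda c^2}{3},
\qquad
\rho_\Lambda \equiv \frac{\Lambda c^2}{8\pi G},\quad p_\Lambda = -\rho_\Lambda c^2
\;\Rightarrow\;
-\frac{4\pi G}{3}\left(\rho_\Lambda + \frac{3p_\Lambda}{c^2}\right) = \frac{8\pi G}{3}\,\rho_\Lambda = \frac{\Lambda c^2}{3} > 0 .
```

By contrast, ordinary matter (p ≈ 0) and radiation (p = ρc²/3) both make the first term negative, which is why on their own they can only decelerate the expansion.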

All of these are reasons why cosmologists favored Λ following the discovery of the accelerating expansion of the universe, and the resulting model remains the most widely accepted one to date (for more information, see this astrobite). It’s the simplest and arguably the most elegant way to match our theory to observations. It isn’t the only possible explanation, though: we could also posit the existence of some new kind of particle or field with a large negative pressure, or we could even modify our theory of gravity on large scales. In fact, the reality might be some combination of these. So how might we differentiate between the different theories and get a clearer picture of dark energy?

“Constraining Parameters” is the Name of the Game

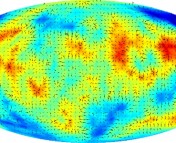

All the structure we see in the universe today came about through the gravitational collapse of dense regions of the early universe. The simplified picture is that when the universe was young and small, quantum fluctuations left some regions with a higher-than-average density and others with a lower-than-average density.

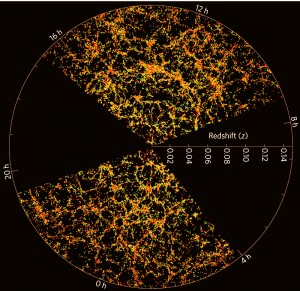

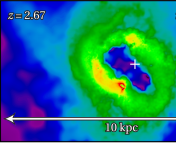

The large-scale distribution of matter in the universe, from the Sloan Digital Sky Survey. Note the characteristic amount of "foaminess."

The expansion of the universe pulls over-dense regions apart and so tends to smooth out these fluctuations, while gravitational attraction causes matter to clump: a cosmic tug-of-war. In over-dense regions larger than a critical size (known as the Jeans length, below which gas pressure can resist collapse), gravity wins out, and the matter collapses to form clumps and eventually galaxies. These galaxies in turn clump together to form clusters, again working against the expansion.
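For reference, the textbook Jeans length for a static, uniform gas with sound speed c_s and mean density ρ̄ is given below. Perturbations larger than λ_J can collapse because gravity beats pressure support. In an expanding universe the analysis is modified and collapse proceeds more slowly, so treat this only as a rough guide to the idea:

```latex
\lambda_J = c_s \sqrt{\frac{\pi}{G\,\bar\rho}}
```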

From this we can see that the formation of structure in the universe depends on the expansion history of the universe and therefore on its dark energy content. Different models of dark energy, each described by its own set of parameters (variables), predict different arrangements of the large-scale structure of the universe. The aim of this paper is to make these predictions concrete, show how they can be tested, and anticipate what we’ll learn with the next generation of space telescopes.
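One way to see how the expansion history feeds into structure is the linear growth equation for small density contrasts, δ̈ + 2Hδ̇ = 4πGρ_m δ. The sketch below is an illustration, not the paper’s pipeline: it assumes a flat universe of matter plus dark energy with a constant equation of state w, ignores radiation, solves the equation with SciPy’s `solve_ivp`, and reports today’s growth factor relative to a matter-only universe. The function name `growth_suppression` is hypothetical.

```python
import numpy as np
from scipy.integrate import solve_ivp

def growth_suppression(omega_m=0.3, w=-1.0, a_init=1e-3):
    """Linear growth factor D at a = 1, normalized so that D = a deep in matter domination.

    Solves D'' + (2 + dlnH/dlna) D' - 1.5 * Omega_m(a) * D = 0, with ' = d/dln(a),
    for a flat universe of matter plus constant-w dark energy (no radiation).
    A matter-only universe would give exactly 1.
    """
    def e2(a):
        # (H/H0)^2 for flat matter + constant-w dark energy
        return omega_m * a ** -3 + (1 - omega_m) * a ** (-3 * (1 + w))

    def rhs(lna, y):
        a = np.exp(lna)
        d, dprime = y
        dlne2_dlna = (-3 * omega_m * a ** -3
                      - 3 * (1 + w) * (1 - omega_m) * a ** (-3 * (1 + w))) / e2(a)
        om_a = omega_m * a ** -3 / e2(a)          # matter fraction at scale factor a
        # dlnH/dlna = 0.5 * dln(E^2)/dlna
        return [dprime, -(2 + 0.5 * dlne2_dlna) * dprime + 1.5 * om_a * d]

    # Start in matter domination, where D = a and dD/dln(a) = a
    sol = solve_ivp(rhs, (np.log(a_init), 0.0), [a_init, a_init], rtol=1e-8, atol=1e-12)
    return sol.y[0, -1]

print(growth_suppression())          # LambdaCDM-like: Omega_m = 0.3, w = -1
print(growth_suppression(w=-0.8))    # constant-w dark energy, same Omega_m today
```

For Ω_m = 0.3 and w = −1 this gives a growth factor of roughly 0.78, meaning dark energy has cut today’s linear growth by about a fifth. With Ω_m fixed today, w = −0.8 grows somewhat less, because its dark energy was relatively more important at earlier times.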

What do we mean by “constraining parameters”? As an example, consider the model that represents dark energy with the cosmological constant, Λ (known as the ΛCDM model, for Λ + Cold Dark Matter). Nothing in our theory fixes the value of the energy density of Λ: we have to determine it by measurement. If, for example, that energy density were many orders of magnitude larger than the few giga electron volts per cubic meter we infer today, the universe would have expanded so rapidly that galaxies, and the Earth, could never have formed, and no one would be around to see other galaxies in the sky. The fact that we observe galaxies therefore constrains the parameter to be smaller than that. More accurate measurements can narrow the allowed range much further. This astrobite discusses three papers on different methods of constraining models, and this astrobite covers the interesting question of how much we can say about our cosmology based just on the fact that we’re around to observe it.
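To put a number on the energy density of Λ, here is a back-of-the-envelope sketch. It assumes a flat universe with round illustrative values H0 = 70 km/s/Mpc and Ω_Λ = 0.7 (not the paper’s fiducial parameters) and uses the critical density ρ_crit = 3H0²/(8πG). The function name is hypothetical.

```python
import math

# Physical constants (SI units)
G = 6.674e-11        # gravitational constant, m^3 kg^-1 s^-2
C = 2.998e8          # speed of light, m/s
EV = 1.602e-19       # one electron volt, in joules
MPC = 3.0857e22      # one megaparsec, in meters

def lambda_energy_density_gev_per_m3(h0_km_s_mpc=70.0, omega_lambda=0.7):
    """Energy density of Lambda, Omega_Lambda * rho_crit * c^2, in GeV per cubic meter."""
    h0 = h0_km_s_mpc * 1e3 / MPC                       # H0 in 1/s
    rho_crit = 3 * h0 ** 2 / (8 * math.pi * G)         # critical mass density, kg/m^3
    energy_density = omega_lambda * rho_crit * C ** 2  # J/m^3
    return energy_density / EV / 1e9                   # GeV/m^3

print(f"{lambda_energy_density_gev_per_m3():.1f} GeV per cubic meter")  # → 3.6 GeV per cubic meter
```

Changing H0 or Ω_Λ within their measured ranges moves this by tens of percent, not by orders of magnitude, which is the sense in which observations already pin Λ down.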

The Near Future of Dark Energy Research

The authors mainly consider five different models of dark energy. There’s the aforementioned ΛCDM model, by far today’s favorite, in which Λ is a constant of the universe representing a fixed energy density. The other models (XCDM, ωCDM, and ϕCDM) allow this energy density to change with time; they therefore cannot be described by a cosmological constant and must instead involve new forms of matter or fields. The authors also briefly consider the implications of a modified theory of gravity.
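To see what letting the energy density change with time means in practice, the sketch below evaluates ρ_DE(z)/ρ_DE(0) for a dark-energy fluid whose equation of state is w(a) = w0 + wa(1 − a). This form (often called the CPL parametrization) is an assumption chosen for illustration: the paper’s XCDM and ωCDM models may be parametrized differently, and ϕCDM follows a scalar-field equation instead. Setting w0 = −1, wa = 0 recovers a cosmological constant, and wa = 0 with w0 ≠ −1 gives a constant-w model.

```python
import numpy as np

def dark_energy_density_ratio(z, w0=-1.0, wa=0.0):
    """rho_DE(z) / rho_DE(0) for w(a) = w0 + wa * (1 - a), with a = 1 / (1 + z).

    Follows from integrating the fluid equation d ln(rho) / d ln(a) = -3 (1 + w(a)).
    """
    a = 1.0 / (1.0 + np.asarray(z, dtype=float))
    return a ** (-3.0 * (1.0 + w0 + wa)) * np.exp(-3.0 * wa * (1.0 - a))

for z in (0.0, 1.0, 2.0):
    print(z,
          dark_energy_density_ratio(z),                  # cosmological constant: always 1
          dark_energy_density_ratio(z, w0=-0.9),         # constant w
          dark_energy_density_ratio(z, w0=-0.9, wa=0.3)) # time-varying w
```

Only the Λ column stays fixed at 1; every other choice makes the dark energy density drift with redshift, and that drift is what changes the expansion history and hence the growth of structure.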

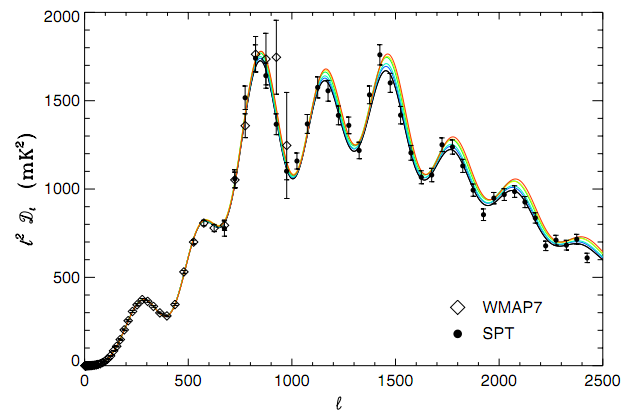

An example of the galaxy power spectrum as it has been measured to date. Fluctuations in density are seen to be larger at larger length scales (toward the left of the plot).

As mentioned before, the form dark energy takes will affect the final large-scale structure of the universe. Therefore, if we can accurately measure the large-scale structure, we can constrain dark energy models. In the past this has been done with the galaxy power spectrum, which quantifies statistically how galaxies are clumped together in the sky. If there were absolutely no clumping and galaxies were scattered randomly throughout the universe, the power spectrum would be flat (and cosmologists would have a much easier job!). To build it, one first measures the two-point correlation function, which quantifies how much more likely it is that a given galaxy has a neighbor at a distance r than it would be for a totally random distribution. The power spectrum is simply the Fourier transform of this correlation function.
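The correlation-function idea can be made concrete with a small sketch. It assumes a hypothetical periodic unit box of points rather than real survey data, and it uses the simple “natural” estimator ξ(r) = DD/RR − 1, where DD counts data pairs in a separation bin and RR is the count expected for a random distribution (computed analytically here). Real surveys use random catalogues that follow the survey footprint and more robust estimators such as Landy–Szalay, and the Fourier transform to the power spectrum is not shown.

```python
import numpy as np

def pair_separations(pos, box=1.0):
    """Separations of all distinct pairs in a periodic cube (minimum-image convention)."""
    d = pos[:, None, :] - pos[None, :, :]
    d -= box * np.round(d / box)              # wrap across the periodic boundaries
    r = np.sqrt((d ** 2).sum(axis=-1))
    i, j = np.triu_indices(len(pos), k=1)     # count each pair once, skip self-pairs
    return r[i, j]

def xi_natural(pos, edges, box=1.0):
    """Natural estimator xi(r) = DD/RR - 1 in separation bins `edges` (keep edges < box/2).

    RR is the pair count expected for an unclustered (uniform) distribution,
    computed analytically from the shell volumes in a periodic box.
    """
    n = len(pos)
    dd, _ = np.histogram(pair_separations(pos, box), bins=edges)
    shell_vol = 4.0 / 3.0 * np.pi * (edges[1:] ** 3 - edges[:-1] ** 3)
    rr = 0.5 * n * (n - 1) * shell_vol / box ** 3
    return dd / rr - 1.0

rng = np.random.default_rng(42)
edges = np.linspace(0.02, 0.2, 10)             # separation bins, in box units

uniform = rng.random((1000, 3))                # no clustering at all
centers = rng.random((50, 3))                  # hypothetical clumps: 50 groups of 20 points
clustered = (np.repeat(centers, 20, axis=0)
             + 0.01 * rng.standard_normal((1000, 3))) % 1.0

xi_uniform = xi_natural(uniform, edges)        # scatters around zero
xi_clustered = xi_natural(clustered, edges)    # large and positive at small separations
print(np.round(xi_uniform, 2))
print(np.round(xi_clustered, 2))
```

The uniform catalogue gives ξ ≈ 0 in every bin, the “no clumping” case whose power spectrum is flat, while the clumped catalogue shows a strong excess of close pairs.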

In order to do this, you need a good telescope, a large portion of the sky to find lots of galaxies, and long observing times to detect the fainter galaxies. You also need to know galaxy redshifts, which indicate distance: otherwise, you couldn’t tell whether two galaxies really are close to each other or just appear to be from your point of view. At least two upcoming space telescope missions fit the bill: WFIRST, NASA’s Wide-Field Infrared Survey Telescope, and the European Space Agency’s Euclid telescope. These telescopes will let us follow the evolution of structure out to higher redshifts and therefore probe earlier epochs of the universe.
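A redshift becomes a distance only once a cosmology is assumed. The minimal sketch below assumes a flat ΛCDM universe with illustrative values H0 = 70 km/s/Mpc and Ω_m = 0.3 (not the paper’s fiducial values) and computes the line-of-sight comoving distance D_C = (c/H0) ∫₀^z dz′/E(z′) with SciPy’s `quad`. The function name is hypothetical.

```python
import numpy as np
from scipy.integrate import quad

C_KM_S = 299792.458  # speed of light, km/s

def comoving_distance_mpc(z, h0=70.0, omega_m=0.3):
    """Line-of-sight comoving distance in Mpc for a flat LambdaCDM universe.

    D_C = (c / H0) * integral_0^z dz' / E(z'),  E(z) = sqrt(Om (1+z)^3 + 1 - Om).
    """
    def inv_e(zp):
        return 1.0 / np.sqrt(omega_m * (1 + zp) ** 3 + (1 - omega_m))
    integral, _ = quad(inv_e, 0.0, z)
    return C_KM_S / h0 * integral

for z in (0.5, 1.0, 2.0):
    print(z, round(comoving_distance_mpc(z)))

# Two galaxies that look adjacent on the sky but have different redshifts
print(comoving_distance_mpc(1.1) - comoving_distance_mpc(1.0))
```

The last line shows that galaxies at z = 1.0 and z = 1.1 that look side by side on the sky are a couple of hundred megaparsecs apart along the line of sight, which is why redshifts are essential for measuring clustering.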

Once you know the scope of your galactic survey and the abilities of your telescope, you can mathematically assess the precision of your statistics, or the quality of the resulting power spectrum you’ll be able to construct. The authors use a method known as the “Fisher matrix formalism,” described in appendix A of the paper. The survey specifications are taken from the mission descriptions of WFIRST and Euclid. From this, they can then tell how strongly the power spectrum will be able to constrain the parameters of the various cosmological models.
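The Fisher matrix idea can be sketched on a toy problem. This is not the paper’s calculation (Appendix A of the paper gives the authors’ formalism); it assumes a hypothetical survey that measures the expansion rate H(z)/H0 with 1% Gaussian errors in 16 redshift bins, for a flat model with parameters Ω_m and a constant w. For independent Gaussian errors that do not depend on the parameters, F_ij = Σ_b (∂μ_b/∂θ_i)(∂μ_b/∂θ_j)/σ_b², and the forecast 1σ error on θ_i after marginalizing over the other parameters is √((F⁻¹)_ii).

```python
import numpy as np

def e_of_z(z, omega_m, w):
    """H(z)/H0 for a flat universe with matter and constant-w dark energy."""
    return np.sqrt(omega_m * (1 + z) ** 3 + (1 - omega_m) * (1 + z) ** (3 * (1 + w)))

def fisher_matrix(model, theta, z, sigma, step=1e-4):
    """F_ij = sum_b (dmu_b/dtheta_i)(dmu_b/dtheta_j) / sigma_b^2.

    Assumes independent Gaussian errors that do not depend on the parameters;
    derivatives are taken by central finite differences.
    """
    theta = np.asarray(theta, dtype=float)
    derivs = []
    for i in range(theta.size):
        dt = np.zeros_like(theta)
        dt[i] = step
        derivs.append((model(z, *(theta + dt)) - model(z, *(theta - dt))) / (2 * step))
    d = np.array(derivs)                       # shape (n_params, n_bins)
    return (d / sigma ** 2) @ d.T

z = np.linspace(0.5, 2.0, 16)                  # hypothetical redshift bins
fiducial = [0.3, -1.0]                         # (Omega_m, w), a LambdaCDM-like fiducial
sigma = 0.01 * e_of_z(z, *fiducial)            # hypothetical 1% measurement errors

F = fisher_matrix(e_of_z, fiducial, z, sigma)
marginal = np.sqrt(np.diag(np.linalg.inv(F)))  # 1-sigma errors, other parameter marginalized
conditional = 1.0 / np.sqrt(np.diag(F))        # 1-sigma errors if the other were known exactly
print(dict(zip(["Omega_m", "w"], marginal)))
```

Comparing `marginal` with `conditional` shows how much the degeneracy between Ω_m and w inflates the forecast errors; marginalized errors are never smaller than conditional ones.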

Conclusion

The authors find that these new measurements should be able to constrain most of the models’ parameters to within roughly 10%, which is remarkable. The ϕCDM model in particular, having fewer free parameters, is more tightly constrained: it has less wiggle room to fit the data.

This is very good news for cosmologists. The authors write:

… data from an experiment of the type we have modelled will be able to provide very good, and probably revolutionary, constraints on the time evolution of dark energy.

These data could also shed some light on the ongoing debate over modified gravity theories as alternatives to dark matter and dark energy. All of this means that in the near future, we will be better able to say which theories fit the data and which should be discarded, which is at the heart of scientific exploration.
