Title: The Physics GRE does not help applicants “stand out”

Authors: Nicholas T. Young and Marcos D. Caballero

First author’s institution: Department of Physics and Astronomy & Department of Computational Mathematics, Science, and Engineering, Michigan State University

Status: Open access on arXiv

If you’re talking about diversity, equity, and inclusion in a U.S. physics and astronomy program, chances are the Physics Graduate Record Exam (a.k.a. the Physics GRE or “PGRE”) will come up. The Physics GRE is a standardized test that has been used for over 35 years to show graduate admissions committees how much fundamental physics an undergraduate knows. However, it usually falls short of showing an undergrad’s ability to actually do physics. For the past few years, removing PGRE requirements for graduate admission has been a point of contention in many university physics departments. The American Astronomical Society, the American Association of Physics Teachers, and the American Physical Society’s Bridge Program have all issued recommendations to limit the use of GREs in admissions, and many studies have examined the effectiveness and bias of these standardized exams. An Astro2020 white paper also recently called for astronomy programs to eliminate both the subject-specific Physics GRE and the general GRE.

Research by both universities and external agencies has shown that the Physics GRE harms marginalized students. With its $150+ price tag (which adds to the growing costs of applying to grad school), the PGRE can be a barrier to entry for lower-income students. It has even been shown to be biased against women, people of color, and students from lower socioeconomic backgrounds; in fact, it is a better indicator of these demographic characteristics than of an applicant’s potential for success in graduate school. Studies have also shown that PGRE scores do not correlate with the likelihood of finishing a doctorate or of earning a prize postdoctoral fellowship.

With all these strikes against it, why do departments still use it? Some claim that it helps students from smaller schools “stand out” and allows for comparison of students from very different backgrounds since it’s standardized, unlike GPA or research experience. It also makes the job of admissions committees easier, since it can quickly narrow down large applicant pools; some schools use a “cutoff score,” a minimum score an applicant needs in order to be a competitive candidate. Of course, some set-in-their-ways faculty will claim that it’s a good indicator and that a good physicist should be able to excel at these exams.

According to today’s paper, over 90% of physics and astronomy programs in the U.S. still normally require or recommend the Physics GRE, despite the recommendations against it. (All the programs that do not accept PGRE scores are either astronomy programs or joint physics and astronomy programs.) Thankfully, the list of schools in the U.S. and Canada that no longer require the PGRE has been growing rapidly. It’s important to note that many of these policies changed drastically for 2020, since the COVID-19 pandemic caused ETS (the company that runs the GREs) to cancel administrations of this year’s exams. These are exceptional circumstances, though, and it is unclear whether graduate programs will revert to their previous policies once circumstances change. Today’s paper offers yet another reason to ditch the PGRE for good, taking down the myth that it evens the playing field by helping applicants “stand out.”

Let’s Look at the Data

This paper looks at two years of data from five physics departments, with the goal of determining how admission rates vary with PGRE scores and other factors (GPA, size and “selectivity” of undergraduate institution, etc.). The five departments are at “selective, research-intensive, primarily white” institutions, four of which are public schools. Each department recorded which applicants were shortlisted, admitted, and accepted, along with each applicant’s undergraduate GPA, GRE scores, undergraduate institution, and demographic information. Today’s study considers only domestic applicants. The authors categorized undergraduate institutions by size (using the number of physics bachelor’s degrees awarded each year, according to the American Institute of Physics) and by selectivity (using Barron’s selectivity index to label them as Ivy League, most selective, highly selective, selective, or non-selective).

Intuitively, all these factors (GPA, undergrad institution, GRE score) could be related to one another, so this paper tries to disentangle them by looking for “mediating relationships” and “moderating relationships.” In a mediating relationship, one variable is related to another partly or entirely through a third variable; for example, staying up late playing video games makes you lose sleep, which leads to poor exam performance the next day. Video games are related to your exam score only via sleep. In a moderating relationship, the strength of the relationship between two variables depends on a third variable; for example, how much you like dogs is related to whether you own one, but that relationship is much weaker if you’re allergic to dogs, so allergy moderates it.

Since GRE score and GPA are the most important predictors of admission, the authors split applicants into groups by GRE score and GPA and computed the fraction admitted in each group. If a group’s admission rate is higher than the overall admission rate, the authors say that the group’s combination of scores makes a student “stand out” in admissions. They also tested for mediating and moderating relationships and looked at other factors: most selective vs. less selective institutions, women vs. men (the authors note that no data on non-binary applicants were available), and marginalized races (Black, Latinx, Multiracial, Native) vs. non-marginalized (white/Asian).
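To make the “stand out” criterion concrete, here is a minimal Python sketch of the comparison described above: bin applicants by PGRE score and GPA, compute the admission rate in each bin, and flag bins whose rate beats the overall rate. The applicant records, the 800-point PGRE cutoff, and the 3.5 GPA cutoff are hypothetical, chosen only for illustration; they are not the paper’s data or its actual bin edges.

```python
from collections import defaultdict

# Hypothetical applicant records: (pgre_score, gpa, admitted).
# These numbers are made up for illustration; they are NOT data from the paper.
APPLICANTS = [
    (920, 3.9, True),
    (880, 3.8, True),
    (850, 3.4, False),
    (700, 3.9, True),
    (650, 3.9, False),
    (940, 3.2, False),
    (600, 3.3, False),
    (820, 3.7, True),
]


def bin_applicant(pgre, gpa, gre_cut=800, gpa_cut=3.5):
    """Assign an applicant to a coarse (GRE, GPA) group; the cutoffs are hypothetical."""
    gre_label = "high GRE" if pgre >= gre_cut else "low GRE"
    gpa_label = "high GPA" if gpa >= gpa_cut else "low GPA"
    return gre_label, gpa_label


def admission_rates(applicants):
    """Return the overall admission rate and the admission rate within each group."""
    counts = defaultdict(lambda: [0, 0])  # group -> [number admitted, group size]
    for pgre, gpa, admitted in applicants:
        group = bin_applicant(pgre, gpa)
        counts[group][0] += int(admitted)
        counts[group][1] += 1
    overall = sum(a for _, _, a in applicants) / len(applicants)
    by_group = {g: adm / tot for g, (adm, tot) in counts.items()}
    return overall, by_group


def stand_out_groups(applicants):
    """Groups whose admission rate exceeds the overall rate, i.e. groups that "stand out"."""
    overall, by_group = admission_rates(applicants)
    return sorted(g for g, rate in by_group.items() if rate > overall)


if __name__ == "__main__":
    overall, by_group = admission_rates(APPLICANTS)
    print(f"overall admission rate: {overall:.2f}")
    for group, rate in sorted(by_group.items()):
        print(group, f"{rate:.2f}")
    print("stand out:", stand_out_groups(APPLICANTS))
```

With these made-up records the overall admission rate is 0.5, and only the high-GRE, high-GPA group exceeds it; the high-GRE, low-GPA group is admitted at a rate of zero, so in this toy example a high PGRE score does not rescue a low GPA.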

A Confluence of Factors

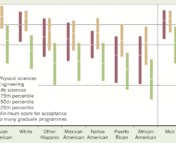

Looking at Figure 1, the first trend that stands out is that most admitted students have a high GRE score and a high GPA, with very few having a high GRE and low GPA, leading to the conclusion that “more applicants could be penalized for having a low physics GRE score despite a high GPA than could benefit from a high physics GRE score despite a low GPA.”

Also, applicants from smaller or less selective institutions (the ones who would need something to make them “stand out”) score lower on the Physics GRE on average to begin with, as shown in Table 1.

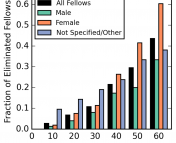

The next question to ask is: how are these probabilities of admission affected by an applicant’s undergraduate institution, gender, and race? Looking at undergraduate size in Figure 2, exceptionally high GRE scores (above 900) don’t seem to have any benefit over high GRE scores (800 to 900) for students from smaller programs.

Looking at undergraduate selectivity, the Physics GRE again does not seem to counteract any potential bias against applicants from less selective institutions, as shown in Figure 3.

Race and gender show more interesting trends. Women are admitted at higher rates than men with similar scores, and Black/Latinx/Multiracial/Native applicants are also admitted at higher rates than white or Asian applicants. The authors speculate that because women and racial minorities tend to score lower than white men on the Physics GRE (due to the exam’s biases), a high GRE score could make these applicants stand out in admissions. However, the authors also note that these results should be “interpreted with caution” as evidence that the PGRE might help applicants stand out, because these institutions were also trying to increase their “diversity” during these admissions years. Additionally, even if the Physics GRE could help marginalized applicants stand out, this potential benefit could be vastly outweighed by the exam’s known scoring discrepancies and biases, as well as by other factors (such as the cost of the exam as a barrier to entry).

Lastly, let’s look at the mediating and moderating relationships between all these factors. The authors didn’t find any evidence of moderation; that is, the relationship between GPA and admission doesn’t change with Physics GRE score. If the “standing out” myth were true and high PGRE scores helped low-GPA applicants, the authors would expect to see a moderating relationship here.
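One simplified way to see what “no moderation” means is to compare the admission-rate gap between high- and low-GPA applicants within each PGRE band. If high PGRE scores rescued low-GPA applicants, the GPA gap would shrink in the high-PGRE band. The sketch below is a stratified comparison on hypothetical, made-up records; it is a stand-in for illustration, not the authors’ statistical model (formal tests of moderation typically use a regression with an interaction term).

```python
# Hypothetical records: (pgre_band, gpa_band, admitted).
# Made-up counts for illustration only; NOT data from the paper.
RECORDS = (
    [("high PGRE", "high GPA", True)] * 3 + [("high PGRE", "high GPA", False)] * 1
    + [("high PGRE", "low GPA", True)] * 1 + [("high PGRE", "low GPA", False)] * 3
    + [("low PGRE", "high GPA", True)] * 2 + [("low PGRE", "high GPA", False)] * 2
    + [("low PGRE", "low GPA", False)] * 4
)


def admit_rate(records, pgre_band, gpa_band):
    """Fraction admitted among records in one (PGRE band, GPA band) cell."""
    outcomes = [adm for p, g, adm in records if p == pgre_band and g == gpa_band]
    if not outcomes:
        raise ValueError(f"no applicants in cell ({pgre_band}, {gpa_band})")
    return sum(outcomes) / len(outcomes)


def gpa_gap_by_pgre(records):
    """Admission-rate gap (high GPA minus low GPA) within each PGRE band.

    Roughly equal gaps across bands suggest PGRE does not moderate the
    GPA-admission relationship; a much smaller gap in the high-PGRE band
    would be the "high PGRE rescues low GPA" pattern.
    """
    bands = sorted({p for p, _, _ in records})
    return {
        band: admit_rate(records, band, "high GPA") - admit_rate(records, band, "low GPA")
        for band in bands
    }


if __name__ == "__main__":
    print(gpa_gap_by_pgre(RECORDS))
```

In this toy data the GPA gap is 0.5 in both PGRE bands, which is what “no moderation” looks like: knowing an applicant’s PGRE band does not change how much GPA matters.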

There were a few mediating relationships, though. The relationship between undergrad selectivity and admission was partly mediated by PGRE score, as was the relationship between undergrad size and admission (which was not mediated by GPA). Physics GRE scores do seem to explain some differences in admission based on where a student went to undergrad, so it is possible for a student to “stand out” with a high PGRE score. But if we look back at the bins of applicants in Figures 1-3, which show how few students have a low GPA and a high GRE score, this doesn’t seem to be what happens in practice.
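“Partly mediated” can be illustrated with the classic product-of-coefficients decomposition: the total effect of X (here, undergrad selectivity) on Y (admission) splits into a direct effect plus an indirect effect a × b that flows through the mediator M (PGRE score). The sketch below uses ordinary least squares on a linear probability model with hypothetical data; both the data and the modeling choice are assumptions for illustration, and the paper’s actual models may differ.

```python
from statistics import fmean

# Hypothetical, made-up applicants (NOT data from the paper):
# X = 1 if the undergrad institution is "most selective", else 0
# M = Physics GRE score (the proposed mediator)
# Y = 1 if admitted, else 0
X_SELECTIVE = [1, 1, 1, 1, 0, 0, 0, 0]
M_PGRE = [900, 850, 800, 700, 750, 650, 600, 700]
Y_ADMITTED = [1, 1, 0, 1, 1, 0, 0, 0]


def _cov(u, v):
    """Sample covariance of two equal-length sequences."""
    mu, mv = fmean(u), fmean(v)
    return sum((a - mu) * (b - mv) for a, b in zip(u, v)) / (len(u) - 1)


def mediation(x, m, y):
    """Split the OLS effect of x on y into direct and indirect (via m) parts.

    Treats the binary outcome with a linear probability model, a simplifying
    assumption for this sketch.
    """
    sxx, smm = _cov(x, x), _cov(m, m)
    sxm, sxy, smy = _cov(x, m), _cov(x, y), _cov(m, y)
    total = sxy / sxx  # c: slope of y on x alone
    a = sxm / sxx  # a: slope of m on x
    det = sxx * smm - sxm ** 2  # nonzero unless x and m are perfectly collinear
    direct = (sxy * smm - smy * sxm) / det  # c': coefficient of x in y ~ x + m
    b = (smy * sxx - sxy * sxm) / det  # b: coefficient of m in y ~ x + m
    return {"total": total, "direct": direct, "indirect": a * b}


if __name__ == "__main__":
    print(mediation(X_SELECTIVE, M_PGRE, Y_ADMITTED))
```

For these made-up numbers the total effect is 0.5, of which 0.35 is indirect (through PGRE) and 0.15 is direct, which is what partial mediation means. With OLS the identity total = direct + indirect holds exactly, which makes this decomposition a convenient teaching example.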

Physics GRE score and undergrad selectivity also show a marginal moderating relationship with admission, which the authors identify as a point for further investigation. If future work confirms that PGRE score moderates the relationship between undergrad selectivity and admission, this would challenge the idea that the PGRE “levels the playing field” for applicants.

Takeaways and Recommendations

Overall, this study shows (yet again) that the PGRE does little, if anything, to increase equity in admissions. The results show that for middle-of-the-pack PGRE scores, the selectivity and size of an applicant’s undergraduate institution don’t give them an advantage. However, at the highest PGRE scores, students from smaller or less selective institutions seem to be at a disadvantage. This work also dispels the myth that the PGRE can help applicants stand out: it not only finds no evidence to support that claim, but even finds the opposite, that a low PGRE score might penalize applicants rather than a high score helping them. The authors advise against using the PGRE to identify applicants who might be missed by other admissions metrics, since such claims aren’t backed by evidence. This work adds another nail in the coffin of standardized graduate admissions exams.

“Beyond astro-ph” articles are not necessarily intended to be representative of the views of the entire Astrobites collaboration, nor do they represent the views of the AAS or all astronomers. While AAS supports Astrobites, Astrobites is editorially independent and content that appears on Astrobites is not reviewed or approved by the AAS.

Disclosure: The author of this Astrobite has previously collaborated with Nicholas Young as part of PERBites, part of the “Science Bites” family of organizations. Check out more physics education research on their site!

Astrobite edited by: Haley Wahl

Featured image credit: Young and Caballero 2020