This guest post was written by Martin Sevcik, a student journalist and first-year Labor Studies undergraduate at UCLA. Martin wrote this article as part of a science journalism class taught by Astrobites author Briley Lewis.

Title: Using Bayesian Deep Learning to infer Planet Mass from Gaps in Protoplanetary Disks

Authors: Sayantan Auddy, Ramit Dey, Min-Kai Lin, Daniel Carrera, and Jacob B. Simon

First author institution: Iowa State University, USA

Status: Submitted to The Astrophysical Journal [open access]

One of the greatest mysteries on planet Earth is the origin of life: exactly when, where, and how did the first organisms emerge? Of course, the opportunity to observe this process on Earth disappeared long ago. But if we can find exoplanets – planets circling other stars – early in their formation process, we may better understand how Earth-like planets form. This would give us key insights into Earth’s early stages, helping us better understand the conditions in which life first emerged.

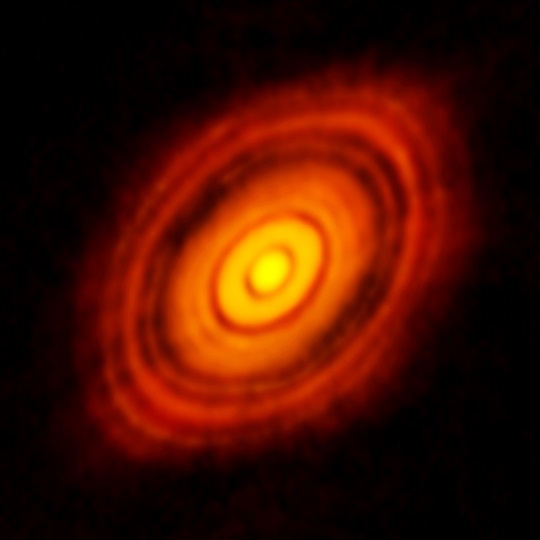

Luckily, we know just where to look for these young exoplanets. Stars form at the centers of collapsing clouds of gas and dust, where the material becomes hot and dense enough to begin fusion. Once the star forms, a disk of leftover gas and dust circles around it – like a spinning record. This is known as a “protoplanetary disk,” and it is where planets begin forming.

To help investigate these young planets, an international team of researchers has developed a machine learning algorithm that analyzes these protoplanetary disks. In their most recent paper, the researchers introduce innovations that let the algorithm report how uncertain each of its estimates is.

Clear images of protoplanetary disks first emerged in 2014, revealing “rings” and gaps that hinted at planetary orbits. But that was all astronomers could identify by eye. If they wanted to learn more – such as planet mass – they would need to develop more complex methodologies.

The researchers turned to neural networks – specifically deep learning algorithms – to solve this problem. These algorithms are loosely structured like a brain! The network receives some inputs, then passes that information through layers of “nodes” – loosely analogous to brain cells – which each transform the data in some way. After the data passes through every layer, the network outputs a value.
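To make the idea of layers and nodes concrete, here is a minimal sketch of data flowing through a tiny network. It is purely illustrative and is not the researchers' model: the layer sizes, the weights, and the `forward` function are all made-up assumptions.

```python
import math

def forward(inputs, layers):
    """Pass inputs through each layer in turn.

    Each layer is a (weights, biases) pair; every row of weights
    belongs to one node in that layer.
    """
    activations = inputs
    for weights, biases in layers:
        activations = [
            # A node sums its weighted inputs, adds a bias, then squashes the result.
            math.tanh(sum(w * a for w, a in zip(row, activations)) + b)
            for row, b in zip(weights, biases)
        ]
    return activations

# Hypothetical toy network: 2 inputs -> 2 hidden nodes -> 1 output node.
layers = [
    ([[0.5, -0.2], [0.1, 0.4]], [0.0, 0.1]),
    ([[1.0, -1.0]], [0.0]),
]

output = forward([1.0, 2.0], layers)
print(output)
```

A real deep learning network works the same way in spirit, just with many more nodes and layers, and with weights that are learned rather than typed in by hand.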

After creating a neural network, programmers need to “train” it. They develop a large number of practice inputs for the program, then determine the correct output for each one. They then feed these inputs into the network one by one and evaluate the accuracy of each output. In this case, the team of researchers trained neural networks to estimate planet masses in protoplanetary disks. They ran simulations of protoplanetary disks, then fed the characteristics of the completed simulations into their neural network. Because the researchers had created every detail of the simulations themselves, they knew what the correct planet masses were – and therefore what estimates the network should produce.

Whenever the network’s output misses the correct answer, the connections between its nodes are adjusted slightly and the input is tried again, which leads to better “learning” by the “machine.” These adjustments continue until the program consistently produces outputs close to the correct ones across the entire training data set. After it is trained, an effective neural network can be exposed to brand-new data and, based on its training, reach accurate conclusions.
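The train-and-adjust loop can be sketched in a few lines. This is a toy example under loud assumptions: the "simulations" below are invented numbers, the relation between gap width and planet mass (`mass = 3 * width + 1`) is made up for illustration, and the "network" is a single node with one weight and one bias rather than a deep network.

```python
# Hypothetical "simulations": each pairs a gap width with a known planet mass.
# The relation mass = 3 * width + 1 is invented purely for illustration.
widths = [0.1 * i for i in range(1, 11)]
true_masses = [3.0 * w + 1.0 for w in widths]

weight, bias = 0.0, 0.0   # the untrained "network" starts out knowing nothing
learning_rate = 0.1       # how big each adjustment is

def mean_squared_error(weight, bias):
    """Average squared gap between the predictions and the known masses."""
    return sum((weight * w + bias - m) ** 2
               for w, m in zip(widths, true_masses)) / len(widths)

initial_error = mean_squared_error(weight, bias)

for step in range(5000):
    grad_w = grad_b = 0.0
    for w, m in zip(widths, true_masses):
        error = weight * w + bias - m          # how far off this prediction is
        grad_w += 2 * error * w / len(widths)
        grad_b += 2 * error / len(widths)
    # Nudge the parameters in the direction that shrinks the error.
    weight -= learning_rate * grad_w
    bias -= learning_rate * grad_b

final_error = mean_squared_error(weight, bias)
print(weight, bias, final_error)
```

Because the toy data follow an exact rule, the loop recovers the weight and bias behind it. Real training data are messier, so in practice the goal is a small error rather than a perfect match.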

To test the accuracy of their trained network, the researchers turned to real-life protoplanetary disks. These particular disks – HL Tau and AS 209 – are well-documented, and multiple other teams have generated mass estimates for their planets using specialized simulations. When the researchers fed the network this new, real-life input data, it output masses similar to those from other studies, supporting the network’s accuracy.

This most recent network is particularly innovative because it employs a relatively new kind of neural network. Typically, a neural network produces only a single value for each input. This most recent AI, employing a system known as a Bayesian neural network, returns a confidence interval instead. This interval conveys a specific mass estimate alongside a range of plausible values around it. The true planet mass is likely in this range of inferred planet masses, given the data!

This interval is valuable because it tells researchers how certain the program’s output is. As the confidence interval gets larger, the specific estimate becomes less certain. In this way, the new Bayesian neural network can provide researchers with more information about their findings, allowing them to draw better-informed conclusions.
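One common way to picture how a Bayesian network produces a range rather than a single number is to run the same input through the network many times, each time with slightly different weights, and then summarize the spread of the answers. The sketch below assumes exactly that picture. The `sample_prediction` function, the weight distributions, and the 16th-to-84th percentile range are all illustrative assumptions, not details taken from the paper.

```python
import random
import statistics

random.seed(0)  # fixed seed so the sketch is repeatable

def sample_prediction(gap_width):
    """Stand-in for one pass through a network with freshly sampled weights.

    The weight and bias distributions here are invented for illustration.
    """
    weight = random.gauss(3.0, 0.3)
    bias = random.gauss(1.0, 0.1)
    return weight * gap_width + bias

# Ask the "network" for many predictions of the same planet's mass.
samples = sorted(sample_prediction(0.5) for _ in range(2000))

estimate = statistics.median(samples)       # the single best guess
lower = samples[int(0.16 * len(samples))]   # roughly the 16th percentile
upper = samples[int(0.84 * len(samples))]   # roughly the 84th percentile

print(f"mass estimate: {estimate:.2f} (range {lower:.2f} to {upper:.2f})")
```

A wide gap between `lower` and `upper` signals that the predictions disagree with one another, so the estimate deserves less trust.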

Ultimately, the researchers stress that this is a proof-of-concept of Bayesian networks; within the submitted paper, they only test the program on protoplanetary disks with established planetary masses. However, they believe that this most recent work effectively demonstrates the power of Bayesian networks, encouraging other astronomers to build similar networks in their own research.

Networks like these allow astronomers to effectively study young planets, telling us what Earth might have looked like about 4.5 billion years ago. Until we can collect deeper data for a diverse set of planets (with a wide variation in planet masses) and protoplanetary systems, we can rely on these emerging machine learning systems to learn more about them with our limited existing data.

Edited by: Gourav Khullar

Featured Image: ALMA