Title: The Variability of Crater Identification Among Expert and Community Crater Analysts

Authors: Stuart J. Robbins and others

First Author’s institution: University of Colorado at Boulder

Status: Published in Icarus

“Citizen scientist” projects have popped up all over the Internet in recent years. Here’s Wikipedia’s list, and here’s our astro-specific list. These projects usually tackle complex visual tasks like mapping neurons or classifying galaxies (a project we’ve discussed before).

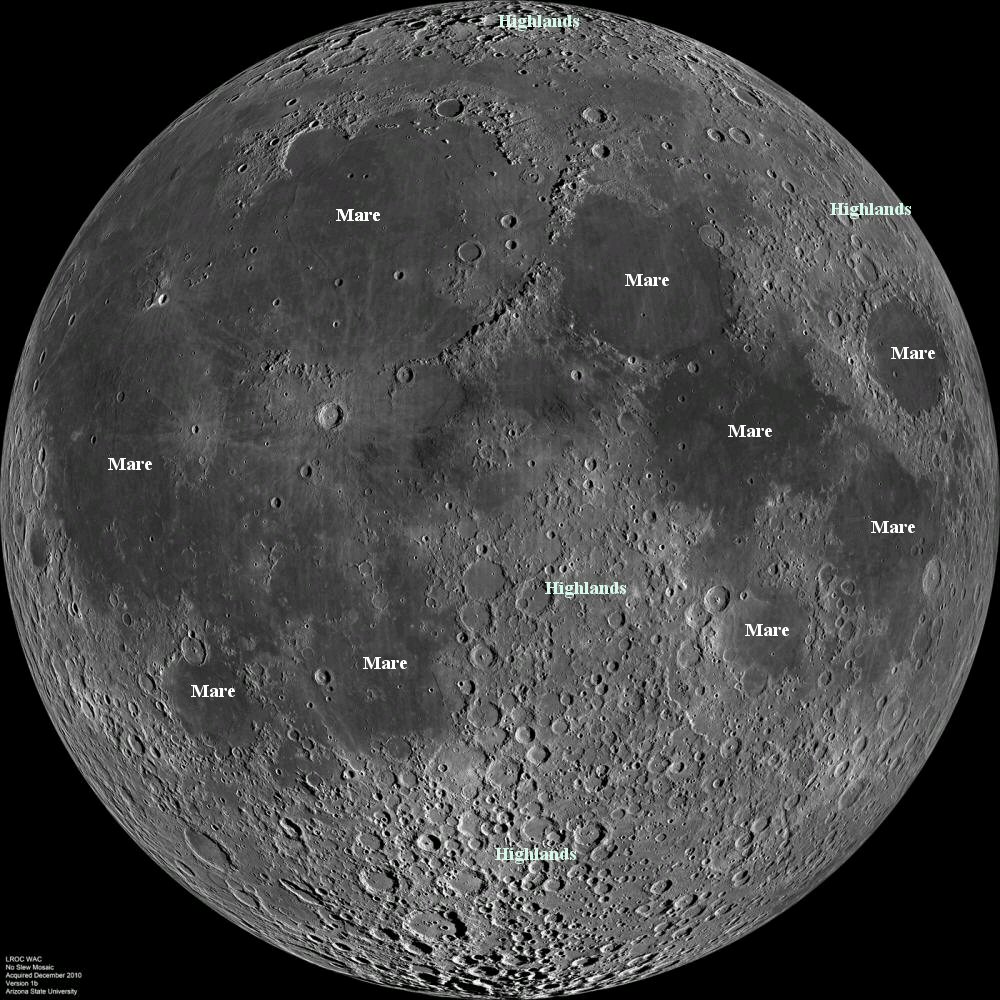

Fig. 1: The near side of the Moon, a mosaic of images captured by the Lunar Reconnaissance Orbiter. Several maria and highlands are marked. The maria (Latin for “seas”, which is what early astronomers actually thought they were) were wiped as clean as a first-period chalkboard by lava flows some 3 billion years ago. (source: NASA/GSFC/ASU)

This is hard work. Not with all the professional scientists in the world could we achieve some of these tasks, not even with their grad students! But by asking for help from an army of untrained volunteers, scientists get much more data, and volunteers get to contribute to fundamental research and explore the beautiful patterns and eccentricities of nature.

The Moon Mappers project asks volunteers to identify craters on the Moon. One use for this work is to determine the relative ages of nearby surfaces. Newer surfaces, recently leveled by lava flows or tectonic activity, have had less time to accumulate craters. For example, the crater-saturated highlands on the Moon are older than the less-cratered maria. Another use for this work is to calibrate models used to determine the bombardment history of the Moon. For this task, scientists need a distribution of crater sizes on the real lunar surface.

So how good are the volunteer Moon Mappers at characterizing crater densities and size distributions? For that matter, how good are the experts?

Today’s study attempts to answer these questions by having a group of experts analyze images of the Moon from the Lunar Reconnaissance Orbiter Camera. Eight experts participated in the study, analyzing two images. The first image captured a variety of terrain types (both mare and highlands). The second image had already been scoured by Moon Mappers volunteers.

Results

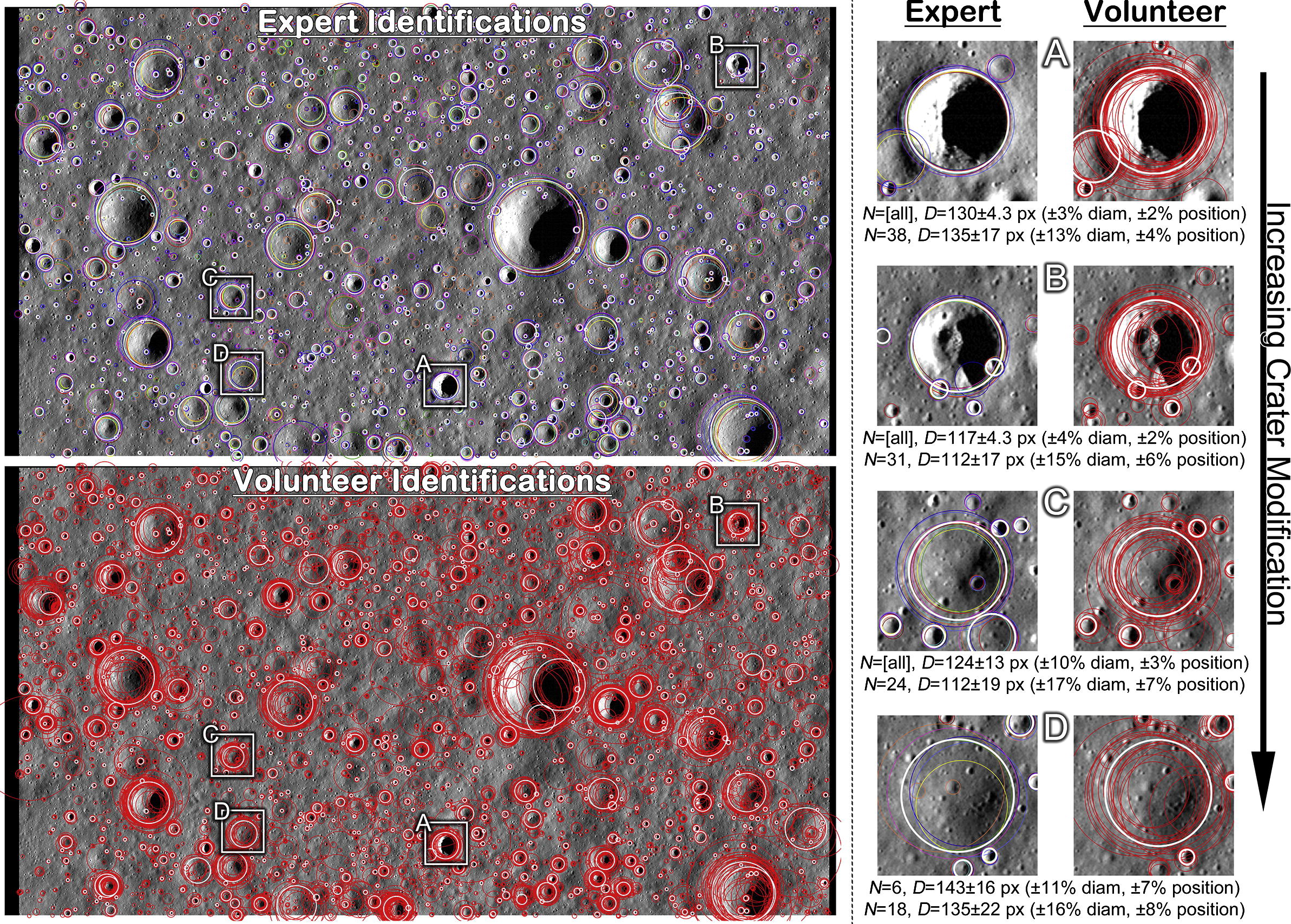

Fig. 2: One of the two images of the lunar surface used in this study. The top panel on the left shows the experts’ clusters, a different color for each expert. The bottom panel on the left shows volunteers’ clusters, all in red. The zoomed-in images to the right show a handful of craters of varying degrees of degradation. As expected, there is a larger spread visible for the volunteers’ clusters. (source: Robbins et al.)

The authors find a 10%-35% disagreement between experts on the number of craters of a given size. The lunar highlands yield the greatest dispersion: they are old and have many degraded features. The mare regions, where the craters are young and well-preserved, yield more consistent counts.

To examine how well analysts agree on the size and location of a given crater, the authors employ a clustering algorithm. To find a cluster, the algorithm searches the datasets for craters within some distance threshold of one another. The distance threshold is scaled by crater diameter so that, for example, if two analysts marked craters with diameters of ~10 px and centers 15 px apart, the marks are treated as two distinct craters. But if they both marked craters with diameters of ~100 px and centers 15 px apart, the marks are treated as the same crater. A final catalog is compiled by excluding the ‘craters’ that only a few analysts found. See Fig. 2 for an example of crater clusters from the experts (top panel) and the volunteers (bottom panel).
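The diameter-scaled matching described above can be illustrated with a small sketch. This is not the authors' implementation: the function name `cluster_craters`, the `distance_factor` of 0.5, the `min_analysts` cutoff, and the union-find grouping are all assumptions chosen so that the two examples from the text (10 px and 100 px craters, centers 15 px apart) behave as described.

```python
from itertools import combinations
from math import hypot


def cluster_craters(marks, distance_factor=0.5, min_analysts=2):
    """Group crater marks from several analysts into a combined catalog.

    marks: list of (analyst, x, y, diameter) tuples, all in pixels.
    distance_factor: ASSUMED fraction of the mean diameter within which
        two centers count as the same crater (not taken from the paper).
    min_analysts: ASSUMED minimum number of distinct analysts needed
        for a cluster to be kept in the final catalog.
    Returns a list of (mean_x, mean_y, mean_diameter, n_analysts).
    """
    parent = list(range(len(marks)))

    def find(i):
        # Union-find root lookup with path halving.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    # Link every pair of marks whose centers lie within the scaled threshold.
    for i, j in combinations(range(len(marks)), 2):
        _, xi, yi, di = marks[i]
        _, xj, yj, dj = marks[j]
        threshold = distance_factor * (di + dj) / 2
        if hypot(xi - xj, yi - yj) <= threshold:
            parent[find(i)] = find(j)

    # Collect the marks belonging to each cluster.
    groups = {}
    for i, mark in enumerate(marks):
        groups.setdefault(find(i), []).append(mark)

    # Keep only clusters that enough distinct analysts agreed on.
    catalog = []
    for members in groups.values():
        analysts = {m[0] for m in members}
        if len(analysts) >= min_analysts:
            n = len(members)
            catalog.append((
                sum(m[1] for m in members) / n,
                sum(m[2] for m in members) / n,
                sum(m[3] for m in members) / n,
                len(analysts),
            ))
    return catalog


# Two ~10 px marks 15 px apart stay separate and are dropped as singletons.
print(cluster_craters([("A", 0, 0, 10), ("B", 15, 0, 10)]))
# Two ~100 px marks 15 px apart merge into one catalog entry.
print(cluster_craters([("A", 0, 0, 100), ("B", 15, 0, 100)]))
```

This sketch compares center positions only. A fuller version would likely also compare diameters, so that a small crater sitting inside a large one is not merged with it.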

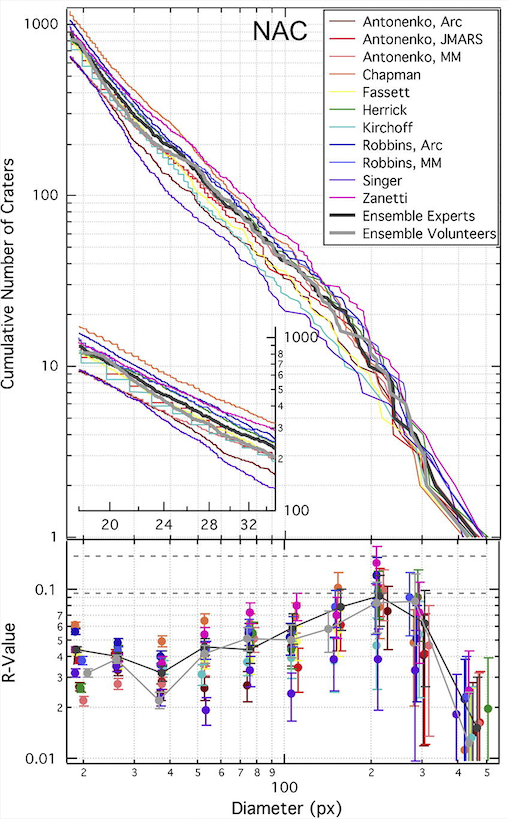

Fig. 3: The top panel shows the number of craters larger than a given diameter (horizontal axis), as determined by different analysts. A different color represents each analyst, and in some cases the same analyst using several different crater-counting techniques. The light gray line shows the catalog generated by clustering the volunteers’ datasets. It falls well within the variations between experts. The bottom panel shows relative deviations from a power-law distribution. (source: Robbins et al.)

The authors find that the experts are in better agreement than the volunteers for any given crater’s diameter and location. This isn’t surprising. The experts have seen many more craters, in many different lighting conditions. And the experts used their own software tools, allowing them to zoom in and change contrast in the image. The Moon Mappers web-based interface is much less powerful.

Finally, the authors find that the size distribution computed from the volunteers’ clustered dataset falls well within the range of the expert analysts’ size distributions. Fig. 3 demonstrates this.
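To make the comparison concrete, here is a minimal sketch of a cumulative size distribution (the number of craters at or above each diameter) and a check of whether one catalog falls inside the envelope spanned by several others. The function names and all of the diameters below are invented for illustration. They are not data from the paper, and the min/max envelope is an assumed stand-in for whatever comparison the authors actually made.

```python
def cumulative_counts(diameters, thresholds):
    """Number of craters with diameter >= each threshold."""
    return [sum(1 for d in diameters if d >= t) for t in thresholds]


def within_expert_range(expert_catalogs, volunteer_catalog, thresholds):
    """For each threshold, is the volunteer count inside the experts' min/max?"""
    expert_curves = [cumulative_counts(c, thresholds) for c in expert_catalogs]
    volunteer_curve = cumulative_counts(volunteer_catalog, thresholds)
    return [
        min(counts) <= v <= max(counts)
        for counts, v in zip(zip(*expert_curves), volunteer_curve)
    ]


# Hypothetical crater diameters in pixels, one list per expert.
experts = [
    [10, 12, 20, 35, 50],
    [11, 19, 36, 52],
    [9, 13, 21, 22, 34, 49],
]
# Hypothetical clustered volunteer catalog.
volunteers = [10, 20, 21, 35, 51]
thresholds = [10, 20, 30, 50]

print(cumulative_counts(volunteers, thresholds))
print(within_expert_range(experts, volunteers, thresholds))
```

With these made-up numbers, the volunteer curve lies inside the expert envelope at every threshold, which is the qualitative behavior that the gray line in Fig. 3 shows.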

In conclusion, the analysis of crater size distributions on a given surface can be done as accurately by a handful of volunteers as by a handful of experts. Furthermore, ages based on counting craters are almost always reported with underestimated errors: they don’t take into account the inherent variation amongst analysts. Properly accounting for errors of this type gives uncertainties of a few hundred million years for surface ages of a few billion years. However, this study shows that the uncertainty is smaller when a group of analysts contributes to the count.

Consider becoming a Moon Mapper, Vesta Mapper, or Mercury Mapper yourself!
