- Title: A 2.4% Determination of the Local Value of the Hubble Constant

- Authors: A. G. Riess, L. M. Macri, S. L. Hoffmann et al.

- First author’s institution: Department of Physics and Astronomy, Johns Hopkins University, Baltimore, MD

- Status: submitted to The Astrophysical Journal

Astrophysicists love a good old-fashioned conflict. If everything is rosy between theory and observations, it’s a peaceful world, yes. But that scenario leaves little room for scrutiny, critique, innovation or resolution, and it is these resolutions that change the way we see the universe. It happened with general relativity, it happened with the expansion of the universe, and now it may be happening again.

Let me set the story up. Starting in the 1910s, Vesto Slipher measures the spectra of dozens of galaxies and finds that most of their spectral lines are shifted toward the red, which means these galaxies are moving away from us. From the size of the shift he calculates how fast. In 1929, Edwin Hubble combines these velocities with his own estimates of the galaxies’ distances and discovers that the further away a galaxy is, the faster it is moving away. He describes this with a linear relationship between distance and velocity, now called Hubble’s law (v = H0 × d), which became one of the strongest pieces of evidence that the universe is expanding and, ultimately, that the Big Bang occurred. Hubble’s own value for the constant H0 is about 500 km/s per megaparsec, far higher than modern values because of errors in his distance calibration.
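To make “a linear relationship” concrete: with velocities from redshifts (v ≈ cz for nearby galaxies) and distances in hand, the Hubble constant is just the slope of a straight line through the origin. The sketch below fits that slope by least squares. The galaxy numbers are made up for illustration (they are not Hubble’s 1929 data), and the helper names are hypothetical.

```python
C_KM_S = 299_792.458  # speed of light in km/s

def velocity_from_redshift(z):
    """Recession velocity v ~ c*z; only valid for small redshifts (z << 1)."""
    return C_KM_S * z

def fit_hubble_constant(distances_mpc, velocities_km_s):
    """Least-squares slope of v = H0 * d for a line forced through the origin."""
    if len(distances_mpc) != len(velocities_km_s) or not distances_mpc:
        raise ValueError("need equal-length, non-empty inputs")
    sum_vd = sum(d * v for d, v in zip(distances_mpc, velocities_km_s))
    sum_dd = sum(d * d for d in distances_mpc)
    return sum_vd / sum_dd  # units: km/s per Mpc

# Hypothetical galaxies, NOT real data: distances in Mpc, redshifts dimensionless.
distances = [0.25, 0.5, 1.0, 1.5, 2.0]
redshifts = [0.0005, 0.0008, 0.0016, 0.0026, 0.0034]
velocities = [velocity_from_redshift(z) for z in redshifts]
h0 = fit_hubble_constant(distances, velocities)
print(f"Fitted H0 ~ {h0:.0f} km/s/Mpc")
```

Forcing the line through the origin encodes the assumption of Hubble’s law itself: zero distance, zero recession velocity.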

Fast forward a few decades: it’s the year 1990. Estimates of the Hubble constant are still neither accurate nor precise, mainly because pinning it down requires a large set of distant astrophysical objects with precisely measured distances and speeds. The constant itself is an interesting beast: values quoted in the community range from about 50 km/s/Mpc (i.e. a galaxy one megaparsec, or about 3.1 × 10^19 km, further away recedes about 50 km/s faster) to about 90 km/s/Mpc (90 km/s faster per megaparsec).
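To see what a range of 50 to 90 km/s/Mpc means in practice, here is Hubble’s law evaluated at a single distance. The 100 Mpc distance is an arbitrary choice for illustration, and the conversion uses 1 Mpc ≈ 3.0857 × 10^19 km.

```python
KM_PER_MPC = 3.0857e19  # one megaparsec in kilometres (about 3.26 million light-years)

def recession_velocity(h0_km_s_per_mpc, distance_mpc):
    """Hubble's law: v = H0 * d, returned in km/s."""
    return h0_km_s_per_mpc * distance_mpc

for h0 in (50, 90):
    v = recession_velocity(h0, 100)  # 100 Mpc is an arbitrary example distance
    print(f"H0 = {h0} km/s/Mpc -> galaxy at 100 Mpc recedes at {v} km/s")

# Dividing out the kilometres leaves H0 in units of 1/second.
print(f"50 km/s/Mpc = {50 / KM_PER_MPC:.2e} per second")
```

At that distance the two ends of the range predict 5000 km/s versus 9000 km/s, a disagreement of nearly a factor of two.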

Enter the Lambda-CDM model.

LCDM is a cosmological model of a universe dominated by dark energy (in the form of a cosmological constant, Λ) and cold dark matter. Alongside Cepheid variables (a type of pulsating star), Type Ia supernovae (a type of exploding star) are among the best distance indicators we have. The year 1998 brings the discovery that distant Type Ia supernovae are fainter than expected for the velocities we measure from their redshifts. Fainter means farther away: at a given redshift, the supernovae are more distant than they would be if the expansion of the universe were slowing down, so the expansion must instead have been speeding up. Ladies and Gentlemen, the accelerated expansion of the universe.
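Why does “fainter than expected” point to acceleration? One rough way to see it is to compare the luminosity distance to a supernova at the same redshift in two simple flat universes: one containing only matter, which decelerates, and one with 30% matter and 70% dark energy. These density parameters, the redshift z = 0.5 and H0 = 70 km/s/Mpc are illustrative assumptions, not values from the paper, and the integral uses a plain Simpson’s rule rather than a cosmology library.

```python
import math

C_KM_S = 299_792.458  # speed of light in km/s

def luminosity_distance_mpc(z, h0, omega_m, omega_lambda, steps=1000):
    """Flat-universe luminosity distance d_L = (1+z) (c/H0) * integral_0^z dz'/E(z').

    E(z) = sqrt(omega_m (1+z)^3 + omega_lambda); assumes omega_m + omega_lambda = 1
    (no curvature, radiation neglected). Integral by composite Simpson's rule.
    """
    if steps % 2:
        steps += 1  # Simpson's rule needs an even number of intervals

    def inv_e(zp):
        return 1.0 / math.sqrt(omega_m * (1 + zp) ** 3 + omega_lambda)

    h = z / steps
    total = inv_e(0.0) + inv_e(z)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * inv_e(i * h)
    comoving = (C_KM_S / h0) * total * h / 3
    return (1 + z) * comoving

z = 0.5
d_decel = luminosity_distance_mpc(z, 70, omega_m=1.0, omega_lambda=0.0)  # matter only
d_lcdm = luminosity_distance_mpc(z, 70, omega_m=0.3, omega_lambda=0.7)   # with dark energy
# Magnitudes are logarithmic: 5*log10 of the distance ratio is how much fainter it looks.
delta_mag = 5 * math.log10(d_lcdm / d_decel)
print(f"d_L: {d_decel:.0f} Mpc (matter only) vs {d_lcdm:.0f} Mpc (LCDM); "
      f"{delta_mag:.2f} mag fainter")
```

With these assumed parameters the LCDM supernova comes out roughly 0.4 magnitudes fainter, the same sense of effect the 1998 teams saw, although the real analyses fit many supernovae across a range of redshifts.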

It is crucial to understand why Type Ia supernovae are such good distance indicators. They are identified by their characteristic spectra, and they come from a standardized process, the thermonuclear explosion of a white dwarf, so they all reach a similar peak brightness; the remaining differences can be corrected using the shape of their light curves. That lets us estimate precisely how luminous each one really is. Meanwhile, the further away something is, the fainter it appears, a natural outcome of the inverse-square law. Comparing how bright a supernova really is with how bright it appears therefore gives its distance.
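The standard-candle argument can be written with the astronomer’s distance modulus, m − M = 5 log10(d / 10 pc), which is the inverse-square law expressed in magnitudes (flux falls as 1/d², and m = −2.5 log10(flux) turns the factor of 2 into 5). The peak absolute magnitude M ≈ −19.3 used below is an assumed, commonly quoted round number; in practice it is exactly the quantity that has to be calibrated. The apparent magnitude is a made-up example.

```python
def distance_from_magnitudes_mpc(apparent_mag, absolute_mag):
    """Invert the distance modulus m - M = 5 log10(d / 10 pc); returns Mpc."""
    distance_pc = 10 ** ((apparent_mag - absolute_mag + 5) / 5)
    return distance_pc / 1e6

def relative_flux(distance_ratio):
    """Inverse-square law: moving k times farther divides the flux by k**2."""
    return 1.0 / distance_ratio ** 2

M_SN_IA = -19.3       # ASSUMED typical peak absolute magnitude of a Type Ia supernova
observed_peak = 15.7  # hypothetical apparent peak magnitude

print(f"distance ~ {distance_from_magnitudes_mpc(observed_peak, M_SN_IA):.0f} Mpc")  # about 100
print(relative_flux(2.0))  # 0.25: twice as far, a quarter as bright
```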

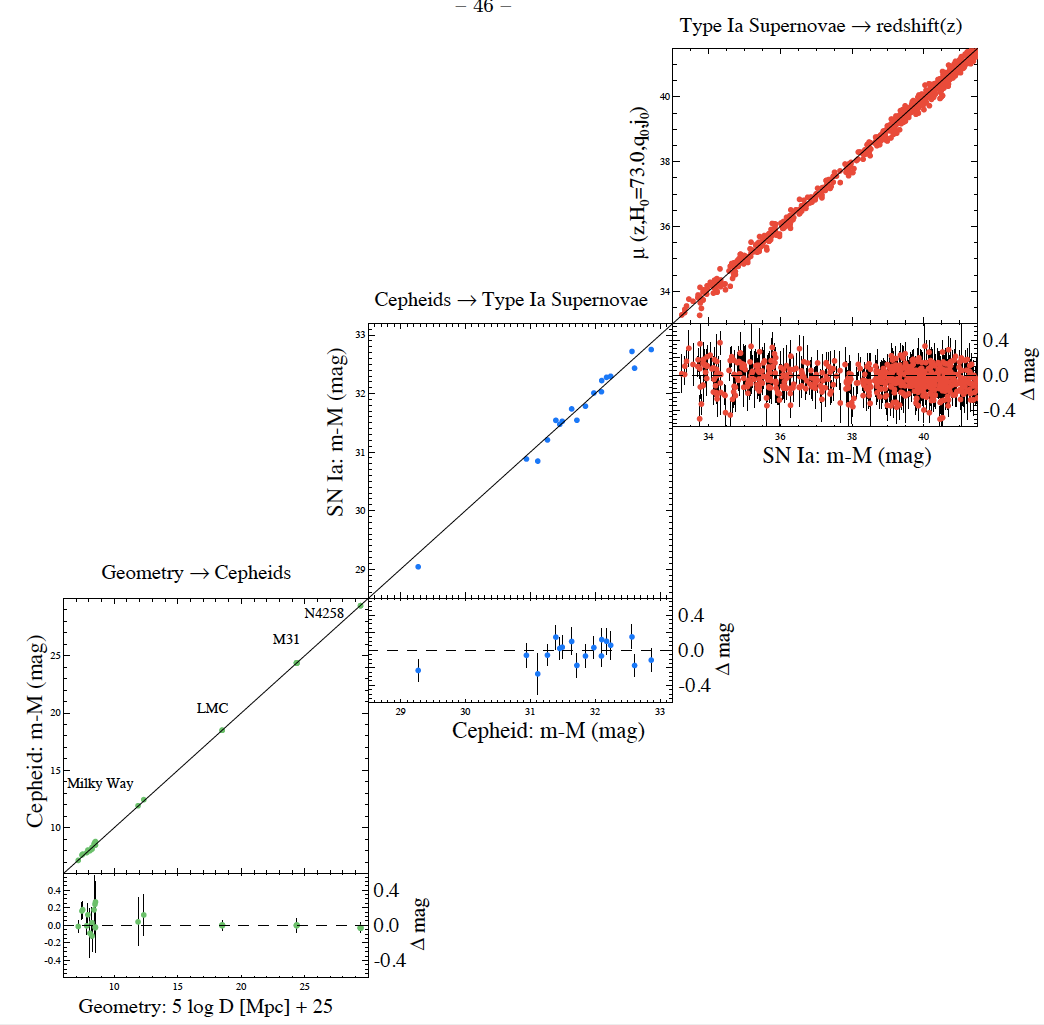

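Back to the present. The paper discussed here presents a new, more precise measurement of the Hubble constant using Cepheid variables, whose pulsation periods reveal their true luminosities, in the same galaxies that hosted recent Type Ia supernovae. Measuring both in the same galaxy lets the Cepheid distances calibrate the true brightness of the supernovae. The objective of this work is twofold: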

– To reduce uncertainties and make distance measurements more precise for the observed systems, using several analytical techniques.

– To measure the Hubble constant in the local universe, where the observed objects lie, and compare it with the value measured using other probes.
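The logic behind these objectives is a distance ladder, which can be sketched in a few lines. Every number here is hypothetical, chosen only to show the chain of reasoning: Cepheids fix the distance to a supernova’s host galaxy, which calibrates the supernova’s true peak brightness; that calibration then gives the distance to a far-away supernova whose redshift we know, and H0 = v / d. The real analysis first anchors the Cepheids to geometric distances and fits all the rungs together with many stars and supernovae; none of that is modelled here, and v ≈ cz is a low-redshift approximation.

```python
C_KM_S = 299_792.458  # speed of light, km/s

def distance_modulus_to_mpc(mu):
    """d = 10^(mu/5 + 1) parsecs, returned in Mpc."""
    return 10 ** (mu / 5 + 1) / 1e6

# Rungs 1 -> 2 (hypothetical numbers): Cepheids give the host galaxy's distance
# modulus, and the supernova that exploded there has an observed peak magnitude.
mu_host_from_cepheids = 31.0
sn_peak_mag_in_host = 11.7
sn_absolute_mag = sn_peak_mag_in_host - mu_host_from_cepheids  # calibrated M

# Rung 3 (hypothetical): a distant supernova in the smooth "Hubble flow".
z_distant = 0.05
sn_peak_mag_distant = 17.35
mu_distant = sn_peak_mag_distant - sn_absolute_mag
d_distant = distance_modulus_to_mpc(mu_distant)

# Low-redshift approximation v ~ c*z, then H0 = v / d.
h0 = C_KM_S * z_distant / d_distant
print(f"M = {sn_absolute_mag:.2f}, d = {d_distant:.1f} Mpc, H0 ~ {h0:.1f} km/s/Mpc")
```

The sketch also shows why shrinking the Cepheid uncertainties matters: any error in the host distance moduli passes straight into the calibrated magnitude M, and from there into every supernova distance and into H0.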

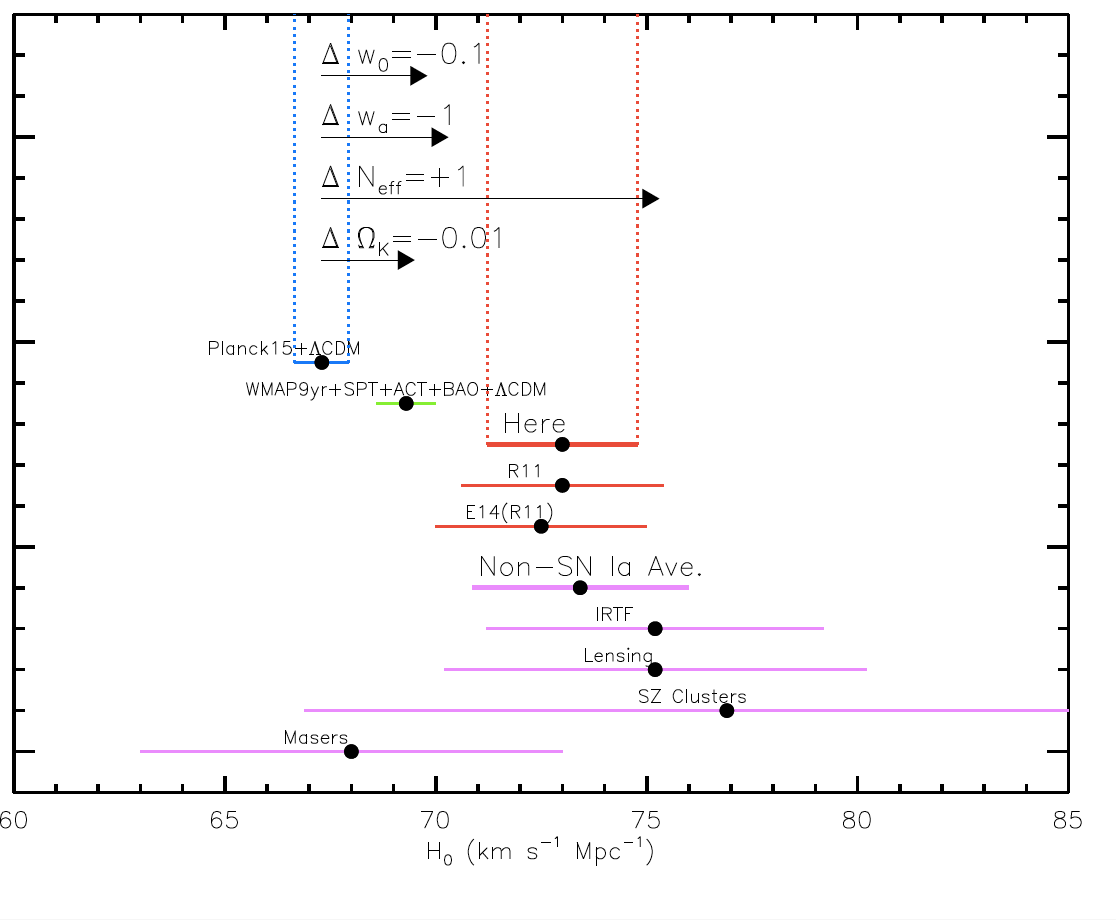

By calibrating the distances to these objects precisely and combining them with the velocities measured from their redshifts, the team pins down H0, and reducing the errors on the distance measurements this far is a significant scientific achievement. The results are, to say the least, very intriguing. The paper claims a value of the local H0 = 73.85 ± 1.97 km s−1 Mpc−1, to a precision of 2.4%.

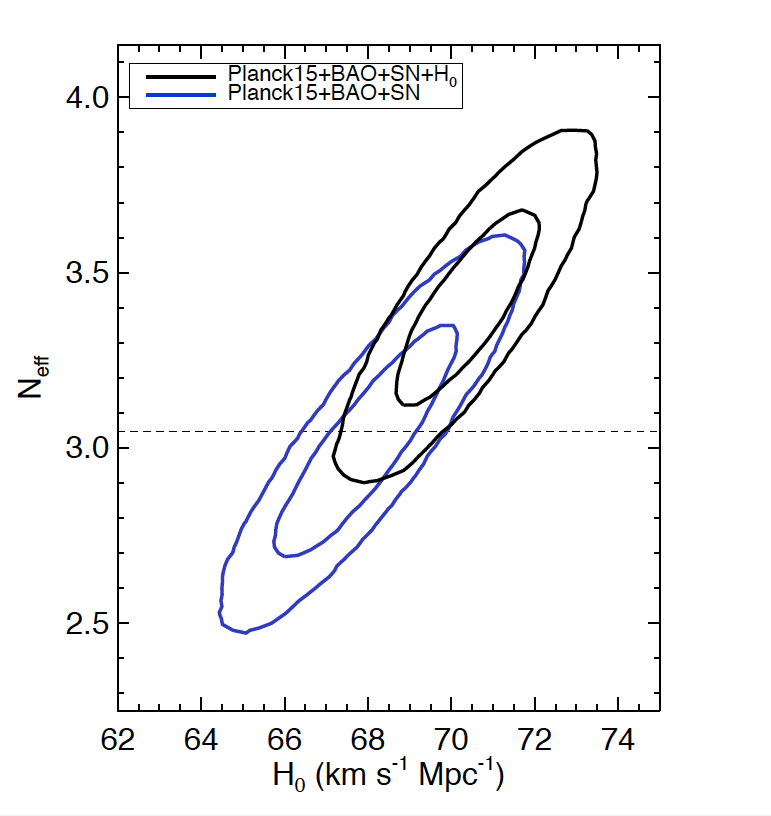

The problem is this. The supernova-based H0 is about 6 km s−1 Mpc−1 higher than the value reported by the Planck collaboration, a team known for its precise cosmological measurements from the Cosmic Microwave Background (CMB). The CMB is a relic of the early universe, and results from it are now colliding head-on with the latest supernova measurements, which probe our local neighborhood of the universe. The comparison is made by using the CMB measurements, within the LCDM model, to predict how the expansion rate evolves from the early universe to the present day; the conflict can be seen in the figures above. The authors suggest that in the Planck analysis, changing a parameter called Neff (the effective number of relativistic particle species, such as neutrinos, in the early universe) could bring the CMB-based value of H0 closer to the one found in the local universe. It is important to note that the values of several other cosmological parameters depend on H0.
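One common shortcut for putting a number on “colliding head-on” is to divide the gap between two measurements by their combined uncertainty. The sketch below assumes independent Gaussian errors. The local value is the one quoted in this post, but the CMB value of 67.8 ± 0.9 km/s/Mpc is an assumption for illustration (close to Planck’s 2015 result), not necessarily the figure used in the paper.

```python
import math

def tension_sigma(value_a, err_a, value_b, err_b):
    """Gap between two independent measurements, in units of their combined
    1-sigma uncertainty (assumes Gaussian, uncorrelated errors)."""
    return abs(value_a - value_b) / math.sqrt(err_a ** 2 + err_b ** 2)

h0_local, err_local = 73.85, 1.97  # local value as quoted in this post
h0_cmb, err_cmb = 67.8, 0.9        # ASSUMED CMB-based value, for illustration only

print(f"difference: {h0_local - h0_cmb:.2f} km/s/Mpc, "
      f"tension: {tension_sigma(h0_local, err_local, h0_cmb, err_cmb):.1f} sigma")
```

With these particular inputs the gap is about 6 km/s/Mpc, matching the post, and works out to roughly 2.8 sigma. The significance quoted in the paper depends on the exact values and uncertainties it compares, which this sketch does not try to reproduce.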

It is too early to say whether this conflict is good or bad news for LCDM. But it is certainly making astrophysicists very excited.

