Title: Probabilistic Catalogs For Crowded Stellar Fields

Authors: B. Brewer, D. Foreman-Mackey and D. Hogg

First Author’s Institution: Department of Statistics, The University of Auckland

Status: Published in the Astronomical Journal, open access

What is a catalog? As far as many of the latest and largest astronomical surveys are concerned (SDSS, 2MASS, USNO-B, etc.), a catalog is essentially a list of sources that includes measurements of a variety of properties, such as source positions and fluxes. These catalogs are undeniably useful, not least because they make the sky searchable and facilitate large statistical studies of whole populations of different objects. But catalogs are not the fundamental data product of a survey, since telescopes make images, not catalogs.

Catalogs are made by people (or perhaps rather the algorithms they invent), who have to make hard decisions about the specifics of raw image calibration and source detection. In this process of catalog-making, some potentially valuable information will almost inevitably get lost. Reluctant to accept this, the authors of today’s paper experiment with a novel representation of the imaging data, a probabilistic catalog that encapsulates more of the available information and thus enables more precise tests of models against the underlying source population.

Probabilistic Catalogs

Instead of trying to detect sources one by one, the idea is to infer the source positions, fluxes and all other relevant properties from the imaging data, treating them as unknown parameters in the framework of Bayesian statistics. That may sound like a hard problem, and it is! But if we could, in this way, find sets of parameters (source positions, fluxes, etc.) to generate mock images that reproduce the observed image well, we would have found plausible catalogs that reflect our knowledge about the presence and properties of sources in the image.

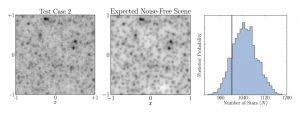

Figure 1 Left: Simulated test image of a crowded field containing 1000 stars. Middle: Summary mock image generated from the inferred posterior distribution for the test image. Right: The posterior probability distribution for the number of sources in the test image. (Figures taken from the paper.)

The main task is to come up with a generative model for the observed image, by formulating a prescription to actually generate realistic mock images (see Figure 1). For simplicity, the authors only consider simulated images in which all expected sources are point sources, such as stars. They also assume that a model of the point spread function (PSF) is known, but it is allowed to have some extra parameters. Noise has to be modeled and added to the mock image as well, to account for the existing measurement noise.

In the terms of Bayesian inference, comparing a mock image to the observed image permits us to calculate the likelihood, which is the probability of obtaining the observations given the parameters. To obtain the necessary probability distribution for the parameters (given the observations), called the posterior probability, we have to factor in our initial knowledge by assigning the parameters a prior probability. For instance, the position of a star must be inside the image for it to be detectable, and unless we have reason to expect the stars to be clustered, the most appropriate choice would be to assume that they are uniformly scattered across the image. If we could justify some explicit model to describe the stellar density (e.g. in a cluster environment), the parameters of this model could in principle be inferred too.

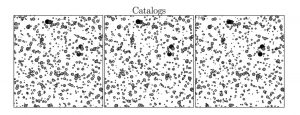

Having written down the likelihood and the full prior probability distribution, we can obtain samples from the posterior distribution numerically using MCMC methods, even if these probabilities are fairly complicated functions. Each of the posterior samples is a set of parameters that provides a star list representing a realization of the underlying source population, as constrained by the observations. Together, these samples can be thought of as a “probabilistic” catalog, where similarities between them indicate features that are credible and differences reveal features that are more uncertain (see Figure 2).

Figure 2 Three example star lists, which are samples drawn from the inferred posterior distribution (i.e. the probabilistic catalog) for the test image shown in Figure 1. (Figure taken from the paper.)

Test Cases

In a high-density, crowded stellar field, multiple sources can happen to appear so close to each other on the sky that their images partially overlap, so that even at the highest achievable resolution it can be difficult to distinguish individual sources and to make unbiased measurements of their properties. In such a case, a probabilistic catalog has the advantage of not possessing a fixed number of sources, but making it possible to infer this number and to estimate its uncertainty. A high fraction of catalog samples showing two stars blending together would imply a high confidence in the presence of two stars instead of one (see Figures 1 and 2).

The authors test their method on two simulated images with different stellar densities and compare the results to star lists produced with the Source Extractor software, which is a widely used and standard tool. In particular in the higher-density case, Source Extractor detects significantly less many of the fainter stars planted in the image, due to crowding. But, perhaps more importantly, by using a probabilistic catalog, the uncertainty in the number of sources (and also all other parameters) could be accurately propagated into further analysis or the final conclusions.

In practice, quite a few difficulties still need to be figured out. The Bayesian method is numerically tricky in several aspects and computationally expensive, and it needs to be more thoroughly tested against other standard tools. More challenges lie in the application to real data, for example with respect to taking stellar motions or variations of the PSF into account. However, the authors have demonstrated the feasibility of constructing a probabilistic catalog for small crowded stellar fields, and the prospects of having such an information-rich catalog at one’s disposal look exciting!

The toughest test of any star catalogue is the observation of a stellar occultation by an asteroid. Better catalogues on the way…? yay!