Title: Sex-Disaggregated Systematics in Canadian Time Allocation Committee Telescope Proposal Reviews

Authors: Kristine Spekkens, Nicholas Cofie, and Dennis Crabtree

First Author’s Institutions: Queen’s University, Royal Military College of Canada

Status: Submitted to Proceedings for SPIE Astronomical Telescopes + Instrumentation, open access on arXiv

If you’re a woman, you probably wouldn’t be surprised to hear that men tend to receive more paper citations or better scores on proposals than women. As women in STEM, we’re outnumbered by men and often faced with prejudices and biases in our fields (check out these posts for previous Astrobites coverage of gender bias). So it’s probably not surprising either that these biases show up in the scores that a Time Allocation Committee (TAC) awards to competing telescope proposals. In fact, there have already been several studies examining this very phenomenon in proposals to the Hubble Space Telescope, European Southern Observatory, and National Radio Astronomy Observatory. Today’s authors systematically evaluate the success of proposals written by men vs. women for Canadian observatories, showing that in the period from 2012-2016 proposals written by women were rated significantly worse than those written by men.

The authors of today’s paper obtained their data from the National Research Council (NRC) of Canada for ten recent telescope proposal cycles. They divided the proposals according to whether the Principal Investigator (PI) was male or female (the authors note that gender identity is not binary, but no information beyond this binary was available to them). Of the 751 proposals analyzed, 72.7% were submitted by men while 27.3% were submitted by women. These numbers change slightly when the sample is divided into faculty PIs (78.5/21.5) vs. non-faculty PIs (69.8/39.2).

Before getting into the meat of this analysis, let’s first summarize the lengthy proposal review process. To start, each proposal goes to a committee of 12 academics (the TAC), where it is assigned to a panel according to whether the proposed science is Galactic or Extragalactic; the committee can solicit feedback from outside parties. The TAC then scores each proposal from 1 to 6 where, counterintuitively, a lower score indicates a better proposal. Next, the normalized scores from both the Galactic and Extragalactic panels are combined and considered alongside instrument or weather constraints to determine which proposals ultimately get the precious observing time. Thus, the proposal scores are predictive of the success of each proposal. An important (and perhaps surprising) aspect of the review process is that the TAC has full access to the names of the PI and Co-Is on each proposal.

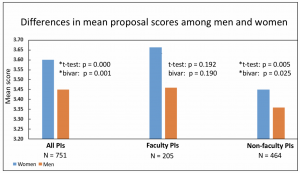

Using a variety of statistical tests [1], Spekkens et al. find that the mean proposal scores (MPSs) in their full ten-cycle sample differ significantly between men and women, with men receiving the better scores overall. The statistically significant difference disappears for the faculty subsample, though the authors note that male faculty still tended to receive better scores. Figure 1 shows the MPSs for the full sample, the faculty-only subsample, and the non-faculty subsample (remember that a lower score is better). Notably, the authors’ results agree regardless of which method they used to test the significance of the MPS difference, suggesting a robust analysis and result.

Figure 1. The mean telescope proposal scores for men vs. women. The left chart shows the results for the full sample, while the middle and right charts break the full sample into faculty and non-faculty PIs, respectively. A lower score indicates a more highly ranked proposal. The difference between men’s and women’s scores is statistically significant for both the full sample and the non-faculty subsample (denoted by asterisks). Figure 1 in the paper.

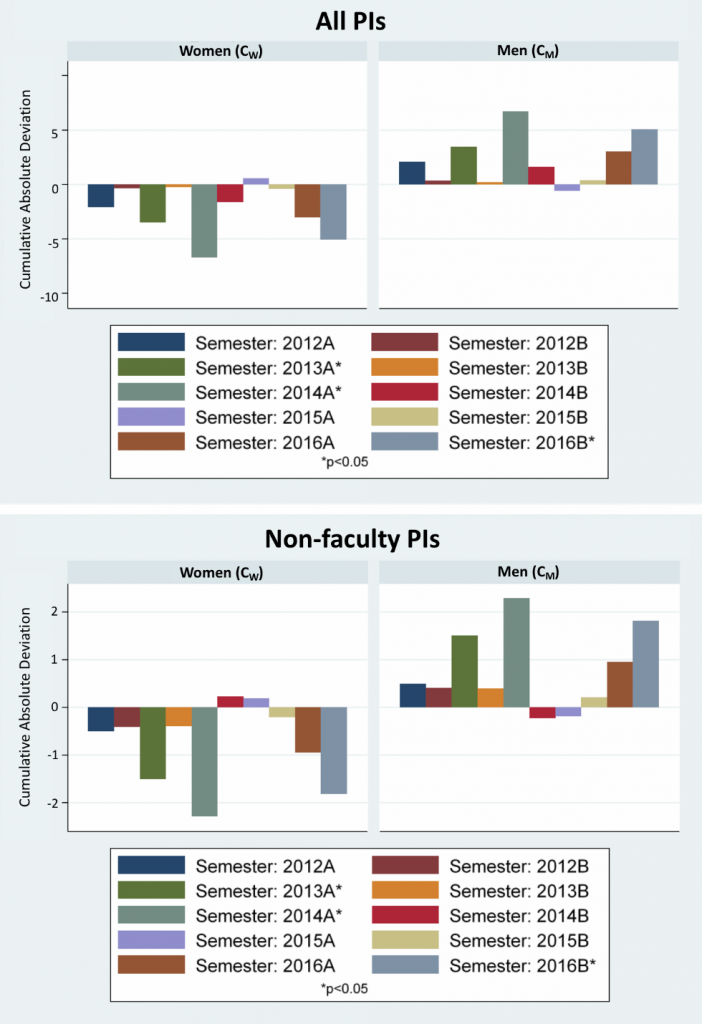

However, there are several other variables aside from gender that could contribute to the overall score. Spekkens et al. considered factors such as the specific telescope requested, the panel that reviewed the proposal, the cycle the proposal was submitted in, or (for the faculty PIs) the year the PI obtained their PhD. Figure 2 shows the results of this analysis when considering the cycle the proposal was submitted in. For all but one cycle (or two cycles, for non-faculty PIs) men’s proposals fare better than women’s. The difference was statistically significant in three of the ten cycles for both the full sample and the non-faculty subsample. While the scientific review panel was also a significant predictor of proposal success for the non-faculty subsample, the PI’s gender was the primary predictor of success regardless of the sample considered.

Figure 2. The difference in mean proposal score between men and women by proposal cycle. The top panel shows the full sample while the bottom panel shows non-faculty PIs. The cumulative absolute deviation (y-axis) represents the total difference of women’s vs. men’s scores from the overall mean. Men’s proposals ranked almost universally better, with significant differences occurring in 2013A, 2014A, and 2016B. Figure 5 in the paper.

Why might men’s proposals consistently rank higher than women’s? Ferdinando Patat of the ESO gender bias study hypothesized that men obtain consistently better scores because they have a higher average seniority. Today’s paper disagrees, because the most significant differences observed in the Canadian TAC sample appear within the non-faculty PI subsample, where there is little difference in seniority among PIs. Spekkens et al. thus propose that the MPS differences between men and women arise from implicit social cognition: unconscious influences that affect a person’s behavior. Notably, if implicit social cognition is indeed the cause, simply changing the demographics of the TAC review committees won’t change anything! Numerous studies have shown that both men and women will consistently rate men higher, even if identical work is performed by women.

The only approach that has improved the observed gender disparity regardless of field or occupation, and that has now been adopted by both the Hubble and Canadian TACs, is blinding the review process (shown here, here, and even for orchestras here). That is, removing identifying information before review has been shown to increase female representation. With a blinded approach to telescope proposal review, neither men nor women go into the process with any preconceived notions about the PI. Therefore, both men and women can provide a much more unbiased assessment of the scientific and technical merit of a proposal.

While women have traditionally been at a significant disadvantage simply due to being women, peer and proposal reviewers have started taking a blinded approach in an attempt to mitigate any implicit bias that might creep in. Many European journals as well as the Hubble TAC (and the Canadian TAC, starting in 2017B!) now remove identifying information of authors and PIs/Co-Is. The gender disparity problem observed in journals and proposals is far from being solved, but with a blind review process there’s hope that the gap might begin to close. After all, the first step to a solution is recognizing there’s a problem!

—

[1] The statistical tests used by the authors were the classical t test, a bivariate regression (when assessing PI gender as the only predictor of MPS), and a multivariate regression (when assessing PI gender as a predictor of MPS while controlling for other variables).
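For readers curious how the first two tests relate, here is a minimal Python sketch. It is not the authors’ code, and the scores in it are invented purely for illustration; the helper names `pooled_t_statistic` and `ols_slope_intercept` are hypothetical. It computes a classical (equal-variance) t statistic and then fits a bivariate regression of score on a 0/1 gender indicator, whose slope equals the difference in mean proposal score between the two groups.

```python
from math import sqrt
from statistics import mean, variance

# Hypothetical, made-up scores (1 = best, 6 = worst). NOT data from the paper.
scores_men = [2.1, 2.8, 3.0, 2.5, 3.4, 2.2, 2.9, 3.1]
scores_women = [2.9, 3.3, 3.6, 2.7, 3.8, 3.1]


def pooled_t_statistic(a, b):
    """Classical two-sample t statistic for mean(b) - mean(a), equal variances assumed."""
    na, nb = len(a), len(b)
    # Pooled sample variance, weighted by each group's degrees of freedom.
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(b) - mean(a)) / sqrt(pooled_var * (1 / na + 1 / nb))


def ols_slope_intercept(x, y):
    """Ordinary least squares fit of y = intercept + slope * x for one predictor."""
    xbar, ybar = mean(x), mean(y)
    sxx = sum((xi - xbar) ** 2 for xi in x)
    sxy = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, ybar - slope * xbar


# Indicator variable: 0 for proposals with a male PI, 1 for a female PI.
x = [0] * len(scores_men) + [1] * len(scores_women)
y = scores_men + scores_women

mps_gap = mean(scores_women) - mean(scores_men)
t_stat = pooled_t_statistic(scores_men, scores_women)
slope, intercept = ols_slope_intercept(x, y)

print(f"MPS gap (women - men): {mps_gap:.3f}")
print(f"pooled t statistic:    {t_stat:.3f}")
print(f"regression slope:      {slope:.3f}")
print(f"regression intercept:  {intercept:.3f}")
```

With a single 0/1 predictor, the regression slope is exactly the gap in group means and the intercept is the mean of the group coded 0. The multivariate regression extends this by adding further predictors (telescope, panel, cycle) so that the gender coefficient is estimated with those held fixed.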

—

If you’d like to test whether you hold implicitly biased world-views, check out Harvard’s Implicit Bias Test. I personally found it very enlightening.

Thank you for taking the time to write this piece, it was an interesting read.

However, I am concerned that you recommend readers take the IAT, given the controversy over the accuracy and validity of such tests.

Also, it seems that the authors of the paper haven’t done a multivariable analysis of this gap; they only used biological sex and ignored other traits such as openness, agreeableness, and conscientiousness, among many others, which is also worrying to me.

I do hope that by blinding the process this gap is reduced.

Hi Denny, thank you for your comment! Regarding the Harvard tests, I don’t recommend that readers take the results as the end-all-be-all. I simply found it interesting that my personal responses did tend to align overall with societal stereotypes more than not, regardless of my conscious opinions on the issues. The authors also noted the Harvard tests in their paper. Regarding the second part of your comment, I simply found the study interesting and was in no way involved, though I do think those traits could be interesting to study if there was a way to score or quantify them.

Hello,

I am aware that astrobites authors are rarely involved with the paper they are reviewing, and I apologize for inadvertently implying otherwise.

Regarding the traits, I’ve seen papers use them to analyse the wage gap among men and women, so it seems they are quantifiable.

One of the debates around the IAT is that it might measure associations that a person has picked up from cultural knowledge, and not associations that actually reside within the person. The counterargument to this point is that the associations from cultural knowledge can influence behavior. This might be the case with your results.

Regardless, this isn’t the place to debate IATs, and neither of us is an expert in the matter.

Thank you for your response.

Hope to see more of your writing soon.