Title: Astronomical Image Quality Prediction based on Environmental and Telescope Operating Conditions

Authors: Sankalp Gilda, Yuan-Sen Ting, Kanoa Withington, Matthew Wilson, Simon Prunet, William Mahoney, Sebastien Fabbro, Stark C. Draper, Andrew Sheinis

First author’s institution: University of Florida, Gainesville, FL, USA

Status: Available on arXiv (open access)

There are only a few major observatories in the world, and lots of science to do. To observe with one of the big telescopes, astronomers have to submit proposals for time, explaining what they want to do and why it's important. This is a pretty competitive process, and because these observatories are oversubscribed, scheduling the observations is a hard problem. Telescope time is expensive, too: around $25,000 USD a night or more, depending on which telescope you're using!

But what if we could use machine learning to help with this problem of scheduling, making observations shorter or more efficient so we could get through more of them in a night? Machine learning has already been used in so many areas of astronomy (identifying FRBs, craters, turbulence, variable stars, and much more), so it makes sense to try to apply it to this complex problem.

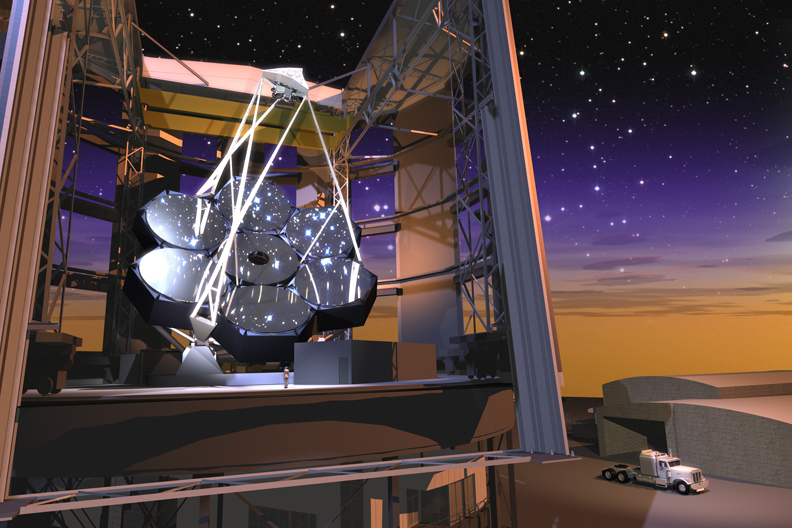

Today's paper begins exploring this idea of using machine learning to optimize observations. The authors focus on the Canada-France-Hawaii Telescope (CFHT) on Mauna Kea, since it has a repository of data stretching all the way back to 1979 that they can use to train their neural net. So many factors change in an observatory dome as it observes a target: the moving parts of the mechanisms in the dome and telescope, the ambient temperature in the dome and outside, wind, atmospheric turbulence, atmospheric pressure, and much more. CFHT in particular has vents to help deal with heat created or trapped in the dome; operators open these vents to release hot air as needed (Figure 1 shows a diagram of the vents on the telescope). For this study, the authors start by looking at which vents on the telescope were open or closed, limiting their scope to this one variable to keep things simple for now.

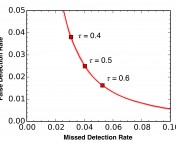

The goal of this study is to use machine learning to optimize the configuration of these vents, improving image quality (and thus also lowering exposure time, since a sharper image concentrates a source's light into fewer pixels, so less time is needed to reach a given signal-to-noise ratio). The authors first looked at the typical configuration used for the vents in their data; it appears that the vents were usually either all closed or all open (first panel of Figure 2). Their neural net was able to predict the image quality based on this information (whether or not the vents are open, keeping all other factors constant) to an accuracy of about 0.07 arcseconds. By then allowing the neural net to optimize the vent configuration, they found that combining open and closed vents (second panel of Figure 2) could improve image quality by 0.05 to 0.2 arcseconds (a 10% improvement!).
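
To make the "predict, then optimize" idea concrete, here is a minimal Python sketch of a brute-force search over open/closed vent settings. It is not the authors' method. The vent count `N_VENTS` is an assumption (this summary does not state it), and `toy_predicted_iq` is a made-up scoring function standing in for a trained neural net; its coefficients are invented purely so the search has something to minimize and say nothing about how real vents behave.

```python
from itertools import product

N_VENTS = 12  # assumption: the number of vents is not given in this summary


def toy_predicted_iq(config):
    """Hypothetical stand-in for a trained model.

    Takes a tuple of 0 (closed) / 1 (open) flags and returns a pretend
    image quality in arcseconds, where lower means sharper. All numbers
    here are invented for illustration only.
    """
    base = 0.70
    # Pretend every open vent helps a little...
    gain = sum(0.02 * v for v in config)
    # ...but two neighbouring open vents partly cancel each other out.
    penalty = sum(0.015 * a * b for a, b in zip(config, config[1:]))
    return base - gain + penalty


def best_config(predict, n_vents):
    """Try all 2**n_vents combinations and keep the lowest predicted value."""
    return min(product((0, 1), repeat=n_vents), key=predict)


all_closed = (0,) * N_VENTS
all_open = (1,) * N_VENTS
best = best_config(toy_predicted_iq, N_VENTS)

print("all closed:", round(toy_predicted_iq(all_closed), 3))
print("all open:  ", round(toy_predicted_iq(all_open), 3))
print("best found:", best, round(toy_predicted_iq(best), 3))
```

With only an open/closed choice per vent, the number of combinations stays small enough to check every one, which is why a simple exhaustive search is enough for this toy version. A real study would also need to make sure the suggested configuration is one the model can be trusted on.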

Their 10% improvement in image quality leads to something really important: a 10-15% reduction in exposure time (how long they have to collect light from a source) and savings of about one million dollars per year in telescope time costs! This is an exciting first step in optimizing telescope scheduling and operations, but there is still much work to do. Vents are only one piece of a much larger puzzle, but with all the legacy data to work with and these positive first results, machine learning looks like a promising path forward!
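
As a rough sanity check on that dollar figure, the back-of-the-envelope arithmetic can be written out in a few lines of Python. This is only a sketch: the nightly cost is the approximate figure quoted earlier in this post, while `NIGHTS_PER_YEAR = 365` is an assumption that every night is usable (real telescopes lose nights to weather and maintenance), and the function name is made up for this example.

```python
COST_PER_NIGHT_USD = 25_000  # approximate figure quoted earlier in this post
NIGHTS_PER_YEAR = 365        # assumption: every night is usable (optimistic)


def annual_value_of_time_saved(fraction_saved,
                               cost_per_night=COST_PER_NIGHT_USD,
                               nights=NIGHTS_PER_YEAR):
    """Dollar value of the observing time freed up in one year."""
    return cost_per_night * nights * fraction_saved


low = annual_value_of_time_saved(0.10)   # 10% shorter exposures
high = annual_value_of_time_saved(0.15)  # 15% shorter exposures
print(f"${low:,.0f} to ${high:,.0f} per year")
```

Under these assumptions the range comes out at roughly $0.9 to $1.4 million per year, which is in line with the "one million dollars" quoted above.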

Astrobite edited by: Huei Sears

Featured image credit: CFHT